Search Results for author: Weisi Lin

Found 88 papers, 56 papers with code

Evolving Storytelling: Benchmarks and Methods for New Character Customization with Diffusion Models

no code implementations • 20 May 2024 • Xiyu Wang, YuFei Wang, Satoshi Tsutsui, Weisi Lin, Bihan Wen, Alex C. Kot

Additionally, to mitigate the character confusion of generated results, we propose EpicEvo, a method that customizes a diffusion-based visual story generation model with a single story featuring the new characters, seamlessly integrating them into established character dynamics.

Enhancing Blind Video Quality Assessment with Rich Quality-aware Features

1 code implementation • 14 May 2024 • Wei Sun, HaoNing Wu, ZiCheng Zhang, Jun Jia, Zhichao Zhang, Linhan Cao, Qiubo Chen, Xiongkuo Min, Weisi Lin, Guangtao Zhai

Motivated by previous research that leverages pre-trained features extracted from various computer vision models as the feature representation for BVQA, we further explore rich quality-aware features from pre-trained blind image quality assessment (BIQA) and BVQA models as auxiliary features to help the BVQA model handle complex distortions and diverse content of social media videos.

Dual-Branch Network for Portrait Image Quality Assessment

1 code implementation • 14 May 2024 • Wei Sun, Weixia Zhang, Yanwei Jiang, HaoNing Wu, ZiCheng Zhang, Jun Jia, Yingjie Zhou, Zhongpeng Ji, Xiongkuo Min, Weisi Lin, Guangtao Zhai

We employ the fidelity loss to train the model in a learning-to-rank manner to mitigate inconsistencies in quality scores in the portrait image quality assessment dataset PIQ.

G-Refine: A General Quality Refiner for Text-to-Image Generation

1 code implementation • 29 Apr 2024 • Chunyi Li, HaoNing Wu, Hongkun Hao, ZiCheng Zhang, Tengchaun Kou, Chaofeng Chen, Lei Bai, Xiaohong Liu, Weisi Lin, Guangtao Zhai

Based on the mechanisms of the Human Visual System (HVS) and syntax trees, the first two indicators can respectively identify the perception and alignment deficiencies, and the last module can apply targeted quality enhancement accordingly.

LMM-PCQA: Assisting Point Cloud Quality Assessment with LMM

no code implementations • 28 Apr 2024 • ZiCheng Zhang, HaoNing Wu, Yingjie Zhou, Chunyi Li, Wei Sun, Chaofeng Chen, Xiongkuo Min, Xiaohong Liu, Weisi Lin, Guangtao Zhai

Although large multi-modality models (LMMs) have seen extensive exploration and application in various quality assessment studies, their integration into Point Cloud Quality Assessment (PCQA) remains unexplored.

AIS 2024 Challenge on Video Quality Assessment of User-Generated Content: Methods and Results

1 code implementation • 24 Apr 2024 • Marcos V. Conde, Saman Zadtootaghaj, Nabajeet Barman, Radu Timofte, Chenlong He, Qi Zheng, Ruoxi Zhu, Zhengzhong Tu, Haiqiang Wang, Xiangguang Chen, Wenhui Meng, Xiang Pan, Huiying Shi, Han Zhu, Xiaozhong Xu, Lei Sun, Zhenzhong Chen, Shan Liu, ZiCheng Zhang, HaoNing Wu, Yingjie Zhou, Chunyi Li, Xiaohong Liu, Weisi Lin, Guangtao Zhai, Wei Sun, Yuqin Cao, Yanwei Jiang, Jun Jia, Zhichao Zhang, Zijian Chen, Weixia Zhang, Xiongkuo Min, Steve Göring, Zihao Qi, Chen Feng

The performance of the top-5 submissions is reviewed and provided here as a survey of diverse deep models for efficient video quality assessment of user-generated content.

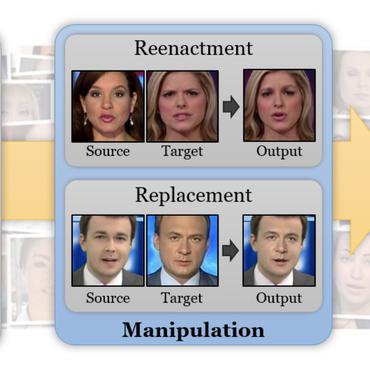

FakeBench: Uncover the Achilles' Heels of Fake Images with Large Multimodal Models

no code implementations • 20 Apr 2024 • Yixuan Li, Xuelin Liu, Xiaoyang Wang, Shiqi Wang, Weisi Lin

Therefore, we propose FakeBench, the first-of-a-kind benchmark towards transparent defake, consisting of fake images with human language descriptions on forgery signs.

NTIRE 2024 Challenge on Short-form UGC Video Quality Assessment: Methods and Results

1 code implementation • 17 Apr 2024 • Xin Li, Kun Yuan, Yajing Pei, Yiting Lu, Ming Sun, Chao Zhou, Zhibo Chen, Radu Timofte, Wei Sun, HaoNing Wu, ZiCheng Zhang, Jun Jia, Zhichao Zhang, Linhan Cao, Qiubo Chen, Xiongkuo Min, Weisi Lin, Guangtao Zhai, Jianhui Sun, Tianyi Wang, Lei LI, Han Kong, Wenxuan Wang, Bing Li, Cheng Luo, Haiqiang Wang, Xiangguang Chen, Wenhui Meng, Xiang Pan, Huiying Shi, Han Zhu, Xiaozhong Xu, Lei Sun, Zhenzhong Chen, Shan Liu, Fangyuan Kong, Haotian Fan, Yifang Xu, Haoran Xu, Mengduo Yang, Jie zhou, Jiaze Li, Shijie Wen, Mai Xu, Da Li, Shunyu Yao, Jiazhi Du, WangMeng Zuo, Zhibo Li, Shuai He, Anlong Ming, Huiyuan Fu, Huadong Ma, Yong Wu, Fie Xue, Guozhi Zhao, Lina Du, Jie Guo, Yu Zhang, huimin zheng, JunHao Chen, Yue Liu, Dulan Zhou, Kele Xu, Qisheng Xu, Tao Sun, Zhixiang Ding, Yuhang Hu

This paper reviews the NTIRE 2024 Challenge on Short-form UGC Video Quality Assessment (S-UGC VQA), where various excellent solutions are submitted and evaluated on the collected dataset KVQ from a popular short-form video platform, i.e., the Kuaishou/Kwai platform.

AesExpert: Towards Multi-modality Foundation Model for Image Aesthetics Perception

1 code implementation • 15 Apr 2024 • Yipo Huang, Xiangfei Sheng, Zhichao Yang, Quan Yuan, Zhichao Duan, Pengfei Chen, Leida Li, Weisi Lin, Guangming Shi

To address the above challenge, we first introduce a comprehensively annotated Aesthetic Multi-Modality Instruction Tuning (AesMMIT) dataset, which serves as the footstone for building multi-modality aesthetics foundation models.

Fine Structure-Aware Sampling: A New Sampling Training Scheme for Pixel-Aligned Implicit Models in Single-View Human Reconstruction

1 code implementation • 29 Feb 2024 • Kennard Yanting Chan, Fayao Liu, Guosheng Lin, Chuan Sheng Foo, Weisi Lin

Lastly, to further improve the training process, FSS proposes a mesh thickness loss signal for pixel-aligned implicit models.

MISC: Ultra-low Bitrate Image Semantic Compression Driven by Large Multimodal Model

2 code implementations • 26 Feb 2024 • Chunyi Li, Guo Lu, Donghui Feng, HaoNing Wu, ZiCheng Zhang, Xiaohong Liu, Guangtao Zhai, Weisi Lin, Wenjun Zhang

With the evolution of storage and communication protocols, ultra-low bitrate image compression has become a highly demanding topic.

Towards Open-ended Visual Quality Comparison

no code implementations • 26 Feb 2024 • HaoNing Wu, Hanwei Zhu, ZiCheng Zhang, Erli Zhang, Chaofeng Chen, Liang Liao, Chunyi Li, Annan Wang, Wenxiu Sun, Qiong Yan, Xiaohong Liu, Guangtao Zhai, Shiqi Wang, Weisi Lin

Comparative settings (e.g., pairwise choice, listwise ranking) have been adopted by a wide range of subjective studies for image quality assessment (IQA), as they inherently standardize the evaluation criteria across different observers and offer more clear-cut responses.

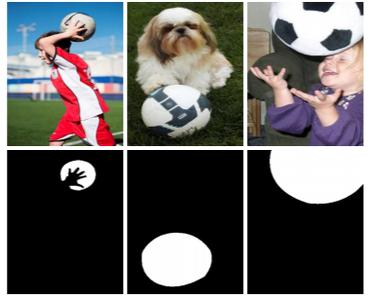

A Benchmark for Multi-modal Foundation Models on Low-level Vision: from Single Images to Pairs

1 code implementation • 11 Feb 2024 • ZiCheng Zhang, HaoNing Wu, Erli Zhang, Guangtao Zhai, Weisi Lin

To this end, we design benchmark settings to emulate human language responses related to low-level vision: the low-level visual perception (A1) via visual question answering related to low-level attributes (e.g., clarity, lighting); and the low-level visual description (A2), on evaluating MLLMs for low-level text descriptions.

AesBench: An Expert Benchmark for Multimodal Large Language Models on Image Aesthetics Perception

1 code implementation • 16 Jan 2024 • Yipo Huang, Quan Yuan, Xiangfei Sheng, Zhichao Yang, HaoNing Wu, Pengfei Chen, Yuzhe Yang, Leida Li, Weisi Lin

An obvious obstacle lies in the absence of a specific benchmark to evaluate the effectiveness of MLLMs on aesthetic perception.

Towards A Better Metric for Text-to-Video Generation

no code implementations • 15 Jan 2024 • Jay Zhangjie Wu, Guian Fang, HaoNing Wu, Xintao Wang, Yixiao Ge, Xiaodong Cun, David Junhao Zhang, Jia-Wei Liu, YuChao Gu, Rui Zhao, Weisi Lin, Wynne Hsu, Ying Shan, Mike Zheng Shou

Experiments on the TVGE dataset demonstrate the superiority of the proposed T2VScore on offering a better metric for text-to-video generation.

Q-Refine: A Perceptual Quality Refiner for AI-Generated Image

1 code implementation • 2 Jan 2024 • Chunyi Li, HaoNing Wu, ZiCheng Zhang, Hongkun Hao, Kaiwei Zhang, Lei Bai, Xiaohong Liu, Xiongkuo Min, Weisi Lin, Guangtao Zhai

With the rapid evolution of Text-to-Image (T2I) models in recent years, their unsatisfactory generation results have become a challenge.

Q-Align: Teaching LMMs for Visual Scoring via Discrete Text-Defined Levels

1 code implementation • 28 Dec 2023 • HaoNing Wu, ZiCheng Zhang, Weixia Zhang, Chaofeng Chen, Liang Liao, Chunyi Li, Yixuan Gao, Annan Wang, Erli Zhang, Wenxiu Sun, Qiong Yan, Xiongkuo Min, Guangtao Zhai, Weisi Lin

The explosion of visual content available online underscores the requirement for an accurate machine assessor to robustly evaluate scores across diverse types of visual contents.

Ranked #1 on

Video Quality Assessment

on LIVE-FB LSVQ

Q-Boost: On Visual Quality Assessment Ability of Low-level Multi-Modality Foundation Models

no code implementations • 23 Dec 2023 • ZiCheng Zhang, HaoNing Wu, Zhongpeng Ji, Chunyi Li, Erli Zhang, Wei Sun, Xiaohong Liu, Xiongkuo Min, Fengyu Sun, Shangling Jui, Weisi Lin, Guangtao Zhai

Recent advancements in Multi-modality Large Language Models (MLLMs) have demonstrated remarkable capabilities in complex high-level vision tasks.

Iterative Token Evaluation and Refinement for Real-World Super-Resolution

1 code implementation • 9 Dec 2023 • Chaofeng Chen, Shangchen Zhou, Liang Liao, HaoNing Wu, Wenxiu Sun, Qiong Yan, Weisi Lin

Distortion removal involves simple HQ token prediction with LQ images, while texture generation uses a discrete diffusion model to iteratively refine the distortion removal output with a token refinement network.

Enhancing Diffusion Models with Text-Encoder Reinforcement Learning

1 code implementation • 27 Nov 2023 • Chaofeng Chen, Annan Wang, HaoNing Wu, Liang Liao, Wenxiu Sun, Qiong Yan, Weisi Lin

While fine-tuning the U-Net can partially improve performance, it still suffers from the suboptimal text encoder.

Q-Instruct: Improving Low-level Visual Abilities for Multi-modality Foundation Models

1 code implementation • 12 Nov 2023 • HaoNing Wu, ZiCheng Zhang, Erli Zhang, Chaofeng Chen, Liang Liao, Annan Wang, Kaixin Xu, Chunyi Li, Jingwen Hou, Guangtao Zhai, Geng Xue, Wenxiu Sun, Qiong Yan, Weisi Lin

Multi-modality foundation models, as represented by GPT-4V, have brought a new paradigm for low-level visual perception and understanding tasks, that can respond to a broad range of natural human instructions in a model.

You Only Train Once: A Unified Framework for Both Full-Reference and No-Reference Image Quality Assessment

1 code implementation • 14 Oct 2023 • Yi Ke Yun, Weisi Lin

When our proposed model is independently trained on NR or FR IQA tasks, it outperforms existing models and achieves state-of-the-art performance.

Ranked #1 on

No-Reference Image Quality Assessment

on LIVE

Q-Bench: A Benchmark for General-Purpose Foundation Models on Low-level Vision

1 code implementation • 25 Sep 2023 • HaoNing Wu, ZiCheng Zhang, Erli Zhang, Chaofeng Chen, Liang Liao, Annan Wang, Chunyi Li, Wenxiu Sun, Qiong Yan, Guangtao Zhai, Weisi Lin

To address this gap, we present Q-Bench, a holistic benchmark crafted to systematically evaluate potential abilities of MLLMs on three realms: low-level visual perception, low-level visual description, and overall visual quality assessment.

IBVC: Interpolation-driven B-frame Video Compression

1 code implementation • 25 Sep 2023 • Chenming Xu, Meiqin Liu, Chao Yao, Weisi Lin, Yao Zhao

Learned B-frame video compression aims to adopt bi-directional motion estimation and motion compensation (MEMC) coding for middle frame reconstruction.

Local Distortion Aware Efficient Transformer Adaptation for Image Quality Assessment

no code implementations • 23 Aug 2023 • Kangmin Xu, Liang Liao, Jing Xiao, Chaofeng Chen, HaoNing Wu, Qiong Yan, Weisi Lin

Further, we propose a local distortion extractor to obtain local distortion features from the pretrained CNN and a local distortion injector to inject the local distortion features into ViT.

Efficient Joint Optimization of Layer-Adaptive Weight Pruning in Deep Neural Networks

2 code implementations • ICCV 2023 • Kaixin Xu, Zhe Wang, Xue Geng, Jie Lin, Min Wu, XiaoLi Li, Weisi Lin

On ImageNet, we achieve up to 4.7% and 4.6% higher top-1 accuracy compared to other methods for VGG-16 and ResNet-50, respectively.

TOPIQ: A Top-down Approach from Semantics to Distortions for Image Quality Assessment

1 code implementation • 6 Aug 2023 • Chaofeng Chen, Jiadi Mo, Jingwen Hou, HaoNing Wu, Liang Liao, Wenxiu Sun, Qiong Yan, Weisi Lin

Our approach to IQA involves the design of a heuristic coarse-to-fine network (CFANet) that leverages multi-scale features and progressively propagates multi-level semantic information to low-level representations in a top-down manner.

Ranked #11 on

Video Quality Assessment

on MSU SR-QA Dataset

Regression-free Blind Image Quality Assessment with Content-Distortion Consistency

1 code implementation • 18 Jul 2023 • Xiaoqi Wang, Jian Xiong, Hao Gao, Weisi Lin

Finally, quality prediction is obtained by aggregating the subjective scores of the retrieved instances.

Advancing Zero-Shot Digital Human Quality Assessment through Text-Prompted Evaluation

1 code implementation • 6 Jul 2023 • ZiCheng Zhang, Wei Sun, Yingjie Zhou, HaoNing Wu, Chunyi Li, Xiongkuo Min, Xiaohong Liu, Guangtao Zhai, Weisi Lin

To address this gap, we propose SJTU-H3D, a subjective quality assessment database specifically designed for full-body digital humans.

You Can Mask More For Extremely Low-Bitrate Image Compression

1 code implementation • 27 Jun 2023 • Anqi Li, Feng Li, Jiaxin Han, Huihui Bai, Runmin Cong, Chunjie Zhang, Meng Wang, Weisi Lin, Yao Zhao

Extensive experiments have demonstrated that our approach outperforms recent state-of-the-art methods in R-D performance, visual quality, and downstream applications, at very low bitrates.

GMS-3DQA: Projection-based Grid Mini-patch Sampling for 3D Model Quality Assessment

1 code implementation • 9 Jun 2023 • ZiCheng Zhang, Wei Sun, Houning Wu, Yingjie Zhou, Chunyi Li, Xiongkuo Min, Guangtao Zhai, Weisi Lin

Model-based 3DQA methods extract features directly from the 3D models, which are characterized by their high degree of complexity.

AGIQA-3K: An Open Database for AI-Generated Image Quality Assessment

1 code implementation • 7 Jun 2023 • Chunyi Li, ZiCheng Zhang, HaoNing Wu, Wei Sun, Xiongkuo Min, Xiaohong Liu, Guangtao Zhai, Weisi Lin

With the rapid advancements of the text-to-image generative model, AI-generated images (AGIs) have been widely applied to entertainment, education, social media, etc.

Towards Explainable In-the-Wild Video Quality Assessment: A Database and a Language-Prompted Approach

1 code implementation • 22 May 2023 • HaoNing Wu, Erli Zhang, Liang Liao, Chaofeng Chen, Jingwen Hou, Annan Wang, Wenxiu Sun, Qiong Yan, Weisi Lin

Though subjective studies have collected overall quality scores for these videos, how the abstract quality scores relate to specific factors is still obscure, hindering VQA methods from more concrete quality evaluations (e.g., sharpness of a video).

GCFAgg: Global and Cross-view Feature Aggregation for Multi-view Clustering

1 code implementation • CVPR 2023 • Weiqing Yan, Yuanyang Zhang, Chenlei Lv, Chang Tang, Guanghui Yue, Liang Liao, Weisi Lin

However, most existing deep clustering methods learn a consensus representation or view-specific representations from multiple views via view-wise aggregation, ignoring the structural relationships among all samples.

Towards Robust Text-Prompted Semantic Criterion for In-the-Wild Video Quality Assessment

2 code implementations • 28 Apr 2023 • HaoNing Wu, Liang Liao, Annan Wang, Chaofeng Chen, Jingwen Hou, Wenxiu Sun, Qiong Yan, Weisi Lin

The proliferation of videos collected during in-the-wild natural settings has pushed the development of effective Video Quality Assessment (VQA) methodologies.

Perceptual Quality Assessment of Face Video Compression: A Benchmark and An Effective Method

1 code implementation • 14 Apr 2023 • Yixuan Li, Bolin Chen, Baoliang Chen, Meng Wang, Shiqi Wang, Weisi Lin

In this paper, we introduce the large-scale Compressed Face Video Quality Assessment (CFVQA) database, which is the first attempt to systematically understand the perceptual quality and diversified compression distortions in face videos.

Blind Multimodal Quality Assessment of Low-light Images

no code implementations • 18 Mar 2023 • Miaohui Wang, Zhuowei Xu, Mai Xu, Weisi Lin

Qualitative and quantitative results on Dark-4K show that BMQA achieves superior performance to existing BIQA approaches as long as a pre-trained model is provided to generate text description.

The First Comprehensive Dataset with Multiple Distortion Types for Visual Just-Noticeable Differences

no code implementations • 5 Mar 2023 • Yaxuan Liu, Jian Jin, Yuan Xue, Weisi Lin

To benefit JND modeling, this work establishes a generalized JND dataset with a coarse-to-fine JND selection, which contains 106 source images and 1,642 JND maps, covering 25 distortion types.

MetaGrad: Adaptive Gradient Quantization with Hypernetworks

no code implementations • 4 Mar 2023 • Kaixin Xu, Alina Hui Xiu Lee, Ziyuan Zhao, Zhe Wang, Min Wu, Weisi Lin

A popular track of network compression approach is Quantization aware Training (QAT), which accelerates the forward pass during the neural network training and inference.

Exploring Opinion-unaware Video Quality Assessment with Semantic Affinity Criterion

2 code implementations • 26 Feb 2023 • HaoNing Wu, Liang Liao, Jingwen Hou, Chaofeng Chen, Erli Zhang, Annan Wang, Wenxiu Sun, Qiong Yan, Weisi Lin

Recent learning-based video quality assessment (VQA) algorithms are expensive to implement due to the cost of data collection of human quality opinions, and are less robust across various scenarios due to the biases of these opinions.

JND-Based Perceptual Optimization For Learned Image Compression

no code implementations • 25 Feb 2023 • Feng Ding, Jian Jin, Lili Meng, Weisi Lin

After combining them together, we can better assign the distortion in the compressed image with the guidance of JND to preserve the high perceptual quality.

Evaluating the Efficacy of Skincare Product: A Realistic Short-Term Facial Pore Simulation

no code implementations • 23 Feb 2023 • Ling Li, Bandara Dissanayake, Tatsuya Omotezako, Yunjie Zhong, Qing Zhang, Rizhao Cai, Qian Zheng, Dennis Sng, Weisi Lin, YuFei Wang, Alex C Kot

In this paper, we propose the first simulation model to reveal facial pore changes after using skincare products.

Lightweight Salient Object Detection in Optical Remote-Sensing Images via Semantic Matching and Edge Alignment

1 code implementation • 7 Jan 2023 • Gongyang Li, Zhi Liu, Xinpeng Zhang, Weisi Lin

Then, semantic kernels are used to activate salient object locations in two groups of high-level features through dynamic convolution operations in DSMM.

Learning Detail-Structure Alternative Optimization for Blind Super-Resolution

1 code implementation • 3 Dec 2022 • Feng Li, Yixuan Wu, Huihui Bai, Weisi Lin, Runmin Cong, Yao Zhao

Recent blind SR methods suggest reconstructing SR images relying on blur kernel estimation.

Bridging Component Learning with Degradation Modelling for Blind Image Super-Resolution

1 code implementation • 3 Dec 2022 • Yixuan Wu, Feng Li, Huihui Bai, Weisi Lin, Runmin Cong, Yao Zhao

In this paper, we analyze the degradation of a high-resolution (HR) image from image intrinsic components according to a degradation-based formulation model.

IntegratedPIFu: Integrated Pixel Aligned Implicit Function for Single-view Human Reconstruction

1 code implementation • 15 Nov 2022 • Kennard Yanting Chan, Guosheng Lin, Haiyu Zhao, Weisi Lin

We propose IntegratedPIFu, a new pixel aligned implicit model that builds on the foundation set by PIFuHD.

DeepDC: Deep Distance Correlation as a Perceptual Image Quality Evaluator

1 code implementation • 9 Nov 2022 • Hanwei Zhu, Baoliang Chen, Lingyu Zhu, Shiqi Wang, Weisi Lin

ImageNet pre-trained deep neural networks (DNNs) show notable transferability for building effective image quality assessment (IQA) models.

Exploring Video Quality Assessment on User Generated Contents from Aesthetic and Technical Perspectives

3 code implementations • ICCV 2023 • HaoNing Wu, Erli Zhang, Liang Liao, Chaofeng Chen, Jingwen Hou, Annan Wang, Wenxiu Sun, Qiong Yan, Weisi Lin

In light of this, we propose the Disentangled Objective Video Quality Evaluator (DOVER) to learn the quality of UGC videos based on the two perspectives.

Ranked #1 on

Video Quality Assessment

on LIVE-VQC

KSS-ICP: Point Cloud Registration based on Kendall Shape Space

1 code implementation • 5 Nov 2022 • Chenlei Lv, Weisi Lin, Baoquan Zhao

The point cloud representation in KSS is invariant to similarity transformations.

Neighbourhood Representative Sampling for Efficient End-to-end Video Quality Assessment

4 code implementations • 11 Oct 2022 • HaoNing Wu, Chaofeng Chen, Liang Liao, Jingwen Hou, Wenxiu Sun, Qiong Yan, Jinwei Gu, Weisi Lin

On the other hand, existing practices, such as resizing and cropping, will change the quality of original videos due to the loss of details and contents, and are therefore harmful to quality assessment.

Ranked #2 on

Video Quality Assessment

on KoNViD-1k

(using extra training data)

Blind Quality Assessment of 3D Dense Point Clouds with Structure Guided Resampling

no code implementations • 31 Aug 2022 • Wei Zhou, Qi Yang, Qiuping Jiang, Guangtao Zhai, Weisi Lin

Objective quality assessment of 3D point clouds is essential for the development of immersive multimedia systems in real-world applications.

HVS-Inspired Signal Degradation Network for Just Noticeable Difference Estimation

1 code implementation • 16 Aug 2022 • Jian Jin, Yuan Xue, Xingxing Zhang, Lili Meng, Yao Zhao, Weisi Lin

However, they have a major drawback that the generated JND is assessed in the real-world signal domain instead of in the perceptual domain in the human brain.

Exploring the Effectiveness of Video Perceptual Representation in Blind Video Quality Assessment

1 code implementation • 8 Jul 2022 • Liang Liao, Kangmin Xu, HaoNing Wu, Chaofeng Chen, Wenxiu Sun, Qiong Yan, Weisi Lin

Experiments show that the perceptual representation in the HVS is an effective way of predicting subjective temporal quality, and thus TPQI can, for the first time, achieve comparable performance to the spatial quality metric and be even more effective in assessing videos with large temporal variations.

FAST-VQA: Efficient End-to-end Video Quality Assessment with Fragment Sampling

4 code implementations • 6 Jul 2022 • HaoNing Wu, Chaofeng Chen, Jingwen Hou, Liang Liao, Annan Wang, Wenxiu Sun, Qiong Yan, Weisi Lin

Consisting of fragments and FANet, the proposed FrAgment Sample Transformer for VQA (FAST-VQA) enables efficient end-to-end deep VQA and learns effective video-quality-related representations.

Ranked #3 on

Video Quality Assessment

on LIVE-VQC

(using extra training data)

DisCoVQA: Temporal Distortion-Content Transformers for Video Quality Assessment

1 code implementation • 20 Jun 2022 • HaoNing Wu, Chaofeng Chen, Liang Liao, Jingwen Hou, Wenxiu Sun, Qiong Yan, Weisi Lin

Based on prominent time-series modeling ability of transformers, we propose a novel and effective transformer-based VQA method to tackle these two issues.

Ranked #5 on

Video Quality Assessment

on KoNViD-1k

Minimum Noticeable Difference based Adversarial Privacy Preserving Image Generation

no code implementations • 17 Jun 2022 • Wen Sun, Jian Jin, Weisi Lin

To achieve this, an adversarial loss is first proposed so that the adversarial images successfully attack the deep learning models.

Distilling Knowledge from Object Classification to Aesthetics Assessment

no code implementations • 2 Jun 2022 • Jingwen Hou, Henghui Ding, Weisi Lin, Weide Liu, Yuming Fang

To deal with this dilemma, we propose to distill knowledge on semantic patterns for a vast variety of image contents from multiple pre-trained object classification (POC) models to an IAA model.

SelfReformer: Self-Refined Network with Transformer for Salient Object Detection

1 code implementation • 23 May 2022 • Yi Ke Yun, Weisi Lin

The global and local contexts significantly contribute to the integrity of predictions in Salient Object Detection (SOD).

Ranked #1 on

Salient Object Detection

on ECSSD

Automatic Facial Skin Feature Detection for Everyone

no code implementations • 30 Mar 2022 • Qian Zheng, Ankur Purwar, Heng Zhao, Guang Liang Lim, Ling Li, Debasish Behera, Qian Wang, Min Tan, Rizhao Cai, Jennifer Werner, Dennis Sng, Maurice van Steensel, Weisi Lin, Alex C Kot

We present an automatic facial skin feature detection method that works across a variety of skin tones and age groups for selfies in the wild.

Adjacent Context Coordination Network for Salient Object Detection in Optical Remote Sensing Images

1 code implementation • 25 Mar 2022 • Gongyang Li, Zhi Liu, Dan Zeng, Weisi Lin, Haibin Ling

As the key component of ACCoNet, ACCoM activates the salient regions of output features of the encoder and transmits them to the decoder.

Full RGB Just Noticeable Difference (JND) Modelling

no code implementations • 1 Mar 2022 • Jian Jin, Dong Yu, Weisi Lin, Lili Meng, Hao Wang, Huaxiang Zhang

Besides, the JND of the red and blue channels are larger than that of the green one according to the experimental results of the proposed model, which demonstrates that more changes can be tolerated in the red and blue channels, in line with the well-known fact that the human visual system is more sensitive to the green channel in comparison with the red and blue ones.

Lightweight Salient Object Detection in Optical Remote Sensing Images via Feature Correlation

1 code implementation • 20 Jan 2022 • Gongyang Li, Zhi Liu, Zhen Bai, Weisi Lin, Haibin Ling

Then, following the coarse-to-fine strategy, we generate an initial coarse saliency map from high-level semantic features in a Correlation Module (CorrM).

Auto-Weighted Layer Representation Based View Synthesis Distortion Estimation for 3-D Video Coding

no code implementations • 7 Jan 2022 • Jian Jin, Xingxing Zhang, Lili Meng, Weisi Lin, Jie Liang, Huaxiang Zhang, Yao Zhao

Experimental results show that the VSD can be accurately estimated with the weights learnt by the nonlinear mapping function once its associated S-VSDs are available.

A New Image Codec Paradigm for Human and Machine Uses

no code implementations • 19 Dec 2021 • Sien Chen, Jian Jin, Lili Meng, Weisi Lin, Zhuo Chen, Tsui-Shan Chang, Zhengguang Li, Huaxiang Zhang

Meanwhile, an image predictor is designed and trained to achieve the general-quality image reconstruction with the 16-bit gray-scale profile and signal features.

Multi-Content Complementation Network for Salient Object Detection in Optical Remote Sensing Images

1 code implementation • 2 Dec 2021 • Gongyang Li, Zhi Liu, Weisi Lin, Haibin Ling

In this paper, we propose a novel Multi-Content Complementation Network (MCCNet) to explore the complementarity of multiple content for RSI-SOD.

Towards Top-Down Just Noticeable Difference Estimation of Natural Images

1 code implementation • 11 Aug 2021 • Qiuping Jiang, Zhentao Liu, Shiqi Wang, Feng Shao, Weisi Lin

Instead of explicitly formulating and fusing different masking effects in a bottom-up way, the proposed JND estimation model first predicts a critical perceptual lossless (CPL) counterpart of the original image and then calculates the difference map between the original image and the predicted CPL image as the JND map.

Low Resolution Information Also Matters: Learning Multi-Resolution Representations for Person Re-Identification

no code implementations • 26 May 2021 • Guoqing Zhang, Yuhao Chen, Weisi Lin, Arun Chandran, Xuan Jing

As a prevailing task in video surveillance and forensics field, person re-identification (re-ID) aims to match person images captured from non-overlapped cameras.

CMUA-Watermark: A Cross-Model Universal Adversarial Watermark for Combating Deepfakes

1 code implementation • 23 May 2021 • Hao Huang, Yongtao Wang, Zhaoyu Chen, Yuze Zhang, Yuheng Li, Zhi Tang, Wei Chu, Jingdong Chen, Weisi Lin, Kai-Kuang Ma

Then, we design a two-level perturbation fusion strategy to alleviate the conflict between the adversarial watermarks generated by different facial images and models.

Voxel Structure-based Mesh Reconstruction from a 3D Point Cloud

1 code implementation • 21 Apr 2021 • Chenlei Lv, Weisi Lin, Baoquan Zhao

Mesh reconstruction from a 3D point cloud is an important topic in the fields of computer graphics, computer vision, and multimedia analysis.

Advanced Geometry Surface Coding for Dynamic Point Cloud Compression

no code implementations • 11 Mar 2021 • Jian Xiong, Hao Gao, Miaohui Wang, Hongliang Li, King Ngi Ngan, Weisi Lin

In video-based dynamic point cloud compression (V-PCC), 3D point clouds are projected onto 2D images for compressing with the existing video codecs.

Just Noticeable Difference for Deep Machine Vision

no code implementations • 16 Feb 2021 • Jian Jin, Xingxing Zhang, Xin Fu, Huan Zhang, Weisi Lin, Jian Lou, Yao Zhao

Experimental results on image classification demonstrate that we successfully find the JND for deep machine vision.

Collaborative Intelligence: Challenges and Opportunities

no code implementations • 13 Feb 2021 • Ivan V. Bajić, Weisi Lin, Yonghong Tian

This paper presents an overview of the emerging area of collaborative intelligence (CI).

Progressive Self-Guided Loss for Salient Object Detection

1 code implementation • 7 Jan 2021 • Sheng Yang, Weisi Lin, Guosheng Lin, Qiuping Jiang, Zichuan Liu

We present a simple yet effective progressive self-guided loss function to facilitate deep learning-based salient object detection (SOD) in images.

Adversarial Exposure Attack on Diabetic Retinopathy Imagery

no code implementations • 19 Sep 2020 • Yupeng Cheng, Felix Juefei-Xu, Qing Guo, Huazhu Fu, Xiaofei Xie, Shang-Wei Lin, Weisi Lin, Yang Liu

In this paper, we study this problem from the viewpoint of adversarial attack and identify a totally new task, i.e., the adversarial exposure attack, which generates adversarial images by tuning image exposure to mislead the DNNs with significantly high transferability.

Pasadena: Perceptually Aware and Stealthy Adversarial Denoise Attack

no code implementations • 14 Jul 2020 • Yupeng Cheng, Qing Guo, Felix Juefei-Xu, Wei Feng, Shang-Wei Lin, Weisi Lin, Yang Liu

To this end, we initiate the very first attempt to study this problem from the perspective of adversarial attack and propose the adversarial denoise attack.

GSTO: Gated Scale-Transfer Operation for Multi-Scale Feature Learning in Pixel Labeling

1 code implementation • 27 May 2020 • Zhuoying Wang, Yongtao Wang, Zhi Tang, Yangyan Li, Ying Chen, Haibin Ling, Weisi Lin

Existing CNN-based methods for pixel labeling heavily depend on multi-scale features to meet the requirements of both semantic comprehension and detail preservation.

Cascaded Parallel Filtering for Memory-Efficient Image-Based Localization

1 code implementation • ICCV 2019 • Wentao Cheng, Weisi Lin, Kan Chen, Xinfeng Zhang

Image-based localization (IBL) aims to estimate the 6DOF camera pose for a given query image.

Learning a No-Reference Quality Assessment Model of Enhanced Images With Big Data

no code implementations • 18 Apr 2019 • Ke Gu, DaCheng Tao, Junfei Qiao, Weisi Lin

Given an image, our quality measure first extracts 17 features through analysis of contrast, sharpness, brightness and more, and then yields a measure of visual quality using a regression module, which is learned with big-data training samples that are much bigger than the size of relevant image datasets.

A Dilated Inception Network for Visual Saliency Prediction

1 code implementation • 7 Apr 2019 • Sheng Yang, Guosheng Lin, Qiuping Jiang, Weisi Lin

In this work, we proposed an end-to-end dilated inception network (DINet) for visual saliency prediction.

Towards Robust Curve Text Detection with Conditional Spatial Expansion

no code implementations • CVPR 2019 • Zichuan Liu, Guosheng Lin, Sheng Yang, Fayao Liu, Weisi Lin, Wang Ling Goh

It is challenging to detect curve texts due to their irregular shapes and varying sizes.

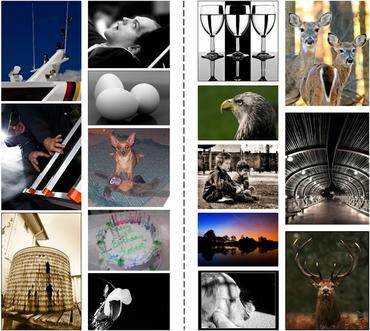

Which Has Better Visual Quality: The Clear Blue Sky or a Blurry Animal?

1 code implementation • IEEE Transactions on Multimedia 2018 • Dingquan Li, Tingting Jiang, Weisi Lin, Ming Jiang

The proposed method, SFA, is compared with nine representative blur-specific NR-IQA methods, two general-purpose NR-IQA methods, and two extra full-reference IQA methods on Gaussian blur images (with and without Gaussian noise/JPEG compression) and realistic blur images from multiple databases, including LIVE, TID2008, TID2013, MLIVE1, MLIVE2, BID, and CLIVE.

Intermediate Deep Feature Compression: the Next Battlefield of Intelligent Sensing

no code implementations • 17 Sep 2018 • Zhuo Chen, Weisi Lin, Shiqi Wang, Ling-Yu Duan, Alex C. Kot

The recent advances of hardware technology have made the intelligent analysis equipped at the front-end with deep learning more prevailing and practical.

Robustness Analysis of Pedestrian Detectors for Surveillance

1 code implementation • 12 Jul 2018 • Yuming Fang, Guanqun Ding, Yuan Yuan, Weisi Lin, Haiwen Liu

In this study, we conduct the research on the robustness of pedestrian detection algorithms to video quality degradation.

Learning Markov Clustering Networks for Scene Text Detection

no code implementations • CVPR 2018 • Zichuan Liu, Guosheng Lin, Sheng Yang, Jiashi Feng, Weisi Lin, Wang Ling Goh

MCN predicts instance-level bounding boxes by firstly converting an image into a Stochastic Flow Graph (SFG) and then performing Markov Clustering on this graph.

Review of Visual Saliency Detection with Comprehensive Information

no code implementations • 9 Mar 2018 • Runmin Cong, Jianjun Lei, Huazhu Fu, Ming-Ming Cheng, Weisi Lin, Qingming Huang

With the acquisition technology development, more comprehensive information, such as depth cue, inter-image correspondence, or temporal relationship, is available to extend image saliency detection to RGBD saliency detection, co-saliency detection, or video saliency detection.

An Iterative Co-Saliency Framework for RGBD Images

no code implementations • 4 Nov 2017 • Runmin Cong, Jianjun Lei, Huazhu Fu, Weisi Lin, Qingming Huang, Xiaochun Cao, Chunping Hou

In this paper, we propose an iterative RGBD co-saliency framework, which utilizes the existing single saliency maps as the initialization, and generates the final RGBD co-saliency map by using a refinement-cycle model.

Image Quality Assessment Guided Deep Neural Networks Training

3 code implementations • 13 Aug 2017 • Zhuo Chen, Weisi Lin, Shiqi Wang, Long Xu, Leida Li

For many computer vision problems, the deep neural networks are trained and validated based on the assumption that the input images are pristine (i.e., artifact-free).