Search Results for author: Xiaodan Liang

Found 269 papers, 121 papers with code

Don’t Take It Literally: An Edit-Invariant Sequence Loss for Text Generation

1 code implementation • NAACL 2022 • Guangyi Liu, Zichao Yang, Tianhua Tao, Xiaodan Liang, Junwei Bao, Zhen Li, Xiaodong He, Shuguang Cui, Zhiting Hu

Such a training objective is sub-optimal when the target sequence is not perfect, e.g., when the target sequence is corrupted with noise, or when only weak sequence supervision is available.

MMTryon: Multi-Modal Multi-Reference Control for High-Quality Fashion Generation

no code implementations • 1 May 2024 • Xujie Zhang, Ente Lin, Xiu Li, Yuxuan Luo, Michael Kampffmeyer, Xin Dong, Xiaodan Liang

Besides, to remove the segmentation dependency, MMTryon uses a parsing-free garment encoder and leverages a novel scalable data generation pipeline to convert existing VITON datasets to a form that allows MMTryon to be trained without requiring any explicit segmentation.

TheaterGen: Character Management with LLM for Consistent Multi-turn Image Generation

1 code implementation • 29 Apr 2024 • Junhao Cheng, Baiqiao Yin, Kaixin Cai, Minbin Huang, Hanhui Li, Yuxin He, Xi Lu, Yue Li, Yifei Li, Yuhao Cheng, Yiqiang Yan, Xiaodan Liang

To address this issue, we introduce TheaterGen, a training-free framework that integrates large language models (LLMs) and text-to-image (T2I) models to provide the capability of multi-turn image generation.

ConsistentID: Portrait Generation with Multimodal Fine-Grained Identity Preserving

1 code implementation • 25 Apr 2024 • Jiehui Huang, Xiao Dong, Wenhui Song, Hanhui Li, Jun Zhou, Yuhao Cheng, Shutao Liao, Long Chen, Yiqiang Yan, Shengcai Liao, Xiaodan Liang

ConsistentID comprises two key components: a multimodal facial prompt generator that combines facial features, corresponding facial descriptions and the overall facial context to enhance precision in facial details, and an ID-preservation network optimized through the facial attention localization strategy, aimed at preserving ID consistency in facial regions.

DetCLIPv3: Towards Versatile Generative Open-vocabulary Object Detection

no code implementations • 14 Apr 2024 • Lewei Yao, Renjie Pi, Jianhua Han, Xiaodan Liang, Hang Xu, Wei zhang, Zhenguo Li, Dan Xu

This is followed by a fine-tuning stage that leverages a small number of high-resolution samples to further enhance detection performance.

MLP Can Be A Good Transformer Learner

1 code implementation • 8 Apr 2024 • Sihao Lin, Pumeng Lyu, Dongrui Liu, Tao Tang, Xiaodan Liang, Andy Song, Xiaojun Chang

We identify that, for the attention layers in the bottom blocks, their subsequent MLP layers, i.e., two feed-forward layers, can elicit the same entropy quantity.

LayerDiff: Exploring Text-guided Multi-layered Composable Image Synthesis via Layer-Collaborative Diffusion Model

no code implementations • 18 Mar 2024 • Runhui Huang, Kaixin Cai, Jianhua Han, Xiaodan Liang, Renjing Pei, Guansong Lu, Songcen Xu, Wei zhang, Hang Xu

Specifically, an inter-layer attention module is designed to encourage information exchange and learning between layers, while a text-guided intra-layer attention module incorporates layer-specific prompts to direct the specific-content generation for each layer.

DialogGen: Multi-modal Interactive Dialogue System for Multi-turn Text-to-Image Generation

1 code implementation • 13 Mar 2024 • Minbin Huang, Yanxin Long, Xinchi Deng, Ruihang Chu, Jiangfeng Xiong, Xiaodan Liang, Hong Cheng, Qinglin Lu, Wei Liu

However, many of these works face challenges in identifying correct output modalities and generating coherent images accordingly as the number of output modalities increases and the conversations go deeper.

Language-Driven Visual Consensus for Zero-Shot Semantic Segmentation

no code implementations • 13 Mar 2024 • ZiCheng Zhang, Tong Zhang, Yi Zhu, Jianzhuang Liu, Xiaodan Liang, Qixiang Ye, Wei Ke

To mitigate these issues, we propose a Language-Driven Visual Consensus (LDVC) approach, fostering improved alignment of semantic and visual information. Specifically, we leverage class embeddings as anchors due to their discrete and abstract nature, steering vision features toward class embeddings.

NavCoT: Boosting LLM-Based Vision-and-Language Navigation via Learning Disentangled Reasoning

1 code implementation • 12 Mar 2024 • Bingqian Lin, Yunshuang Nie, Ziming Wei, Jiaqi Chen, Shikui Ma, Jianhua Han, Hang Xu, Xiaojun Chang, Xiaodan Liang

Vision-and-Language Navigation (VLN), as a crucial research problem of Embodied AI, requires an embodied agent to navigate through complex 3D environments following natural language instructions.

Towards Deviation-Robust Agent Navigation via Perturbation-Aware Contrastive Learning

no code implementations • 9 Mar 2024 • Bingqian Lin, Yanxin Long, Yi Zhu, Fengda Zhu, Xiaodan Liang, Qixiang Ye, Liang Lin

To encourage the agent to capture the differences introduced by perturbation, a perturbation-aware contrastive learning mechanism is further developed by contrasting perturbation-free trajectory encodings and perturbation-based counterparts.

DNA Family: Boosting Weight-Sharing NAS with Block-Wise Supervisions

1 code implementation • 2 Mar 2024 • Guangrun Wang, Changlin Li, Liuchun Yuan, Jiefeng Peng, Xiaoyu Xian, Xiaodan Liang, Xiaojun Chang, Liang Lin

Addressing this problem, we modularize a large search space into blocks with small search spaces and develop a family of models with the distilling neural architecture (DNA) techniques.

AlignMiF: Geometry-Aligned Multimodal Implicit Field for LiDAR-Camera Joint Synthesis

1 code implementation • 27 Feb 2024 • Tao Tang, Guangrun Wang, Yixing Lao, Peng Chen, Jie Liu, Liang Lin, Kaicheng Yu, Xiaodan Liang

Through extensive experiments across various datasets and scenes, we demonstrate the effectiveness of our approach in facilitating better interaction between LiDAR and camera modalities within a unified neural field.

MUSTARD: Mastering Uniform Synthesis of Theorem and Proof Data

1 code implementation • 14 Feb 2024 • Yinya Huang, Xiaohan Lin, Zhengying Liu, Qingxing Cao, Huajian Xin, Haiming Wang, Zhenguo Li, Linqi Song, Xiaodan Liang

Recent large language models (LLMs) have witnessed significant advancement in various tasks, including mathematical reasoning and theorem proving.

GS-CLIP: Gaussian Splatting for Contrastive Language-Image-3D Pretraining from Real-World Data

no code implementations • 9 Feb 2024 • Haoyuan Li, Yanpeng Zhou, Yihan Zeng, Hang Xu, Xiaodan Liang

3D shapes represented as point clouds have achieved advancements in multimodal pre-training to align image and language descriptions, which is crucial to object identification, classification, and retrieval.

MapGPT: Map-Guided Prompting with Adaptive Path Planning for Vision-and-Language Navigation

no code implementations • 14 Jan 2024 • Jiaqi Chen, Bingqian Lin, ran Xu, Zhenhua Chai, Xiaodan Liang, Kwan-Yee K. Wong

Embodied agents equipped with GPT as their brain have exhibited extraordinary decision-making and generalization abilities across various tasks.

3D Visibility-aware Generalizable Neural Radiance Fields for Interacting Hands

1 code implementation • 2 Jan 2024 • Xuan Huang, Hanhui Li, Zejun Yang, Zhisheng Wang, Xiaodan Liang

Subsequently, a feature fusion module that exploits the visibility of query points and mesh vertices is introduced to adaptively merge features of both hands, enabling the recovery of features in unseen areas.

Holistic Autonomous Driving Understanding by Bird's-Eye-View Injected Multi-Modal Large Models

1 code implementation • 2 Jan 2024 • Xinpeng Ding, Jinahua Han, Hang Xu, Xiaodan Liang, Wei zhang, Xiaomeng Li

BEV-InMLLM integrates multi-view, spatial awareness, and temporal semantics to enhance MLLMs' capabilities on NuInstruct tasks.

Monocular 3D Hand Mesh Recovery via Dual Noise Estimation

1 code implementation • 26 Dec 2023 • Hanhui Li, Xiaojian Lin, Xuan Huang, Zejun Yang, Zhisheng Wang, Xiaodan Liang

However, due to the fixed hand topology and complex hand poses, current models struggle to generate meshes that are well aligned with the image.

Towards Detailed Text-to-Motion Synthesis via Basic-to-Advanced Hierarchical Diffusion Model

no code implementations • 18 Dec 2023 • Zhenyu Xie, Yang Wu, Xuehao Gao, Zhongqian Sun, Wei Yang, Xiaodan Liang

Besides, we introduce a multi-denoiser framework for the advanced diffusion model to ease the learning of the high-dimensional model and fully explore the generative potential of the diffusion model.

WarpDiffusion: Efficient Diffusion Model for High-Fidelity Virtual Try-on

no code implementations • 6 Dec 2023 • Xujie Zhang, Xiu Li, Michael Kampffmeyer, Xin Dong, Zhenyu Xie, Feida Zhu, Haoye Dong, Xiaodan Liang

Image-based Virtual Try-On (VITON) aims to transfer an in-shop garment image onto a target person.

DreamVideo: High-Fidelity Image-to-Video Generation with Image Retention and Text Guidance

no code implementations • 5 Dec 2023 • Cong Wang, Jiaxi Gu, Panwen Hu, Songcen Xu, Hang Xu, Xiaodan Liang

Especially for fidelity, our model has a powerful image retention ability and, to the best of our knowledge, delivers the best results on UCF101 compared to other image-to-video models.

CLOMO: Counterfactual Logical Modification with Large Language Models

no code implementations • 29 Nov 2023 • Yinya Huang, Ruixin Hong, Hongming Zhang, Wei Shao, Zhicheng Yang, Dong Yu, ChangShui Zhang, Xiaodan Liang, Linqi Song

In this study, we delve into the realm of counterfactual reasoning capabilities of large language models (LLMs).

Speak Like a Native: Prompting Large Language Models in a Native Style

1 code implementation • 22 Nov 2023 • Zhicheng Yang, Yiwei Wang, Yinya Huang, Jing Xiong, Xiaodan Liang, Jing Tang

Specifically, with AlignedCoT, we observe an average +3.2% improvement for gpt-3.5-turbo compared to the carefully handcrafted CoT on multi-step reasoning benchmarks. Furthermore, we use AlignedCoT to rewrite the CoT text style in the training set, which improves the performance of Retrieval Augmented Generation by 3.6%. The source code and dataset are available at https://github.com/yangzhch6/AlignedCoT

TRIGO: Benchmarking Formal Mathematical Proof Reduction for Generative Language Models

1 code implementation • 16 Oct 2023 • Jing Xiong, Jianhao Shen, Ye Yuan, Haiming Wang, Yichun Yin, Zhengying Liu, Lin Li, Zhijiang Guo, Qingxing Cao, Yinya Huang, Chuanyang Zheng, Xiaodan Liang, Ming Zhang, Qun Liu

Automated theorem proving (ATP) has become an appealing domain for exploring the reasoning ability of the recent successful generative language models.

DQ-LoRe: Dual Queries with Low Rank Approximation Re-ranking for In-Context Learning

1 code implementation • 4 Oct 2023 • Jing Xiong, Zixuan Li, Chuanyang Zheng, Zhijiang Guo, Yichun Yin, Enze Xie, Zhicheng Yang, Qingxing Cao, Haiming Wang, Xiongwei Han, Jing Tang, Chengming Li, Xiaodan Liang

Dual Queries first queries the LLM to obtain LLM-generated knowledge such as CoT, then queries the retriever to obtain the final exemplars via both the question and the knowledge.

LEGO-Prover: Neural Theorem Proving with Growing Libraries

1 code implementation • 1 Oct 2023 • Haiming Wang, Huajian Xin, Chuanyang Zheng, Lin Li, Zhengying Liu, Qingxing Cao, Yinya Huang, Jing Xiong, Han Shi, Enze Xie, Jian Yin, Zhenguo Li, Heng Liao, Xiaodan Liang

Our ablation study indicates that these newly added skills are indeed helpful for proving theorems, resulting in an improvement from a success rate of 47.1% to 50.4%.

Ranked #1 on Automated Theorem Proving on miniF2F-test (Pass@100 metric)

Towards High-Fidelity Text-Guided 3D Face Generation and Manipulation Using only Images

no code implementations • ICCV 2023 • Cuican Yu, Guansong Lu, Yihan Zeng, Jian Sun, Xiaodan Liang, Huibin Li, Zongben Xu, Songcen Xu, Wei zhang, Hang Xu

In this paper, we propose a text-guided 3D face generation method, referred to as TG-3DFace, for generating realistic 3D faces using text guidance.

DiffCloth: Diffusion Based Garment Synthesis and Manipulation via Structural Cross-modal Semantic Alignment

no code implementations • ICCV 2023 • Xujie Zhang, BinBin Yang, Michael C. Kampffmeyer, Wenqing Zhang, Shiyue Zhang, Guansong Lu, Liang Lin, Hang Xu, Xiaodan Liang

Cross-modal garment synthesis and manipulation will significantly benefit the way fashion designers generate garments and modify their designs via flexible linguistic interfaces. Current approaches follow the general text-to-image paradigm and mine cross-modal relations via simple cross-attention modules, neglecting the structural correspondence between visual and textual representations in the fashion design domain.

GrowCLIP: Data-aware Automatic Model Growing for Large-scale Contrastive Language-Image Pre-training

no code implementations • ICCV 2023 • Xinchi Deng, Han Shi, Runhui Huang, Changlin Li, Hang Xu, Jianhua Han, James Kwok, Shen Zhao, Wei zhang, Xiaodan Liang

Compared with existing methods, GrowCLIP improves average top-1 accuracy by 2.3% on zero-shot image classification across 9 downstream tasks.

Coordinate Transformer: Achieving Single-stage Multi-person Mesh Recovery from Videos

no code implementations • ICCV 2023 • Haoyuan Li, Haoye Dong, Hanchao Jia, Dong Huang, Michael C. Kampffmeyer, Liang Lin, Xiaodan Liang

Multi-person 3D mesh recovery from videos is a critical first step towards automatic perception of group behavior in virtual reality, physical therapy and beyond.

DiffDis: Empowering Generative Diffusion Model with Cross-Modal Discrimination Capability

no code implementations • ICCV 2023 • Runhui Huang, Jianhua Han, Guansong Lu, Xiaodan Liang, Yihan Zeng, Wei zhang, Hang Xu

DiffDis first formulates the image-text discriminative problem as a generative diffusion process of the text embedding from the text encoder conditioned on the image.

CTP: Towards Vision-Language Continual Pretraining via Compatible Momentum Contrast and Topology Preservation

1 code implementation • 14 Aug 2023 • Hongguang Zhu, Yunchao Wei, Xiaodan Liang, Chunjie Zhang, Yao Zhao

Given the growing nature of real-world data, such an offline training paradigm on ever-expanding data is unsustainable, because models lack the continual learning ability to accumulate knowledge constantly.

LAW-Diffusion: Complex Scene Generation by Diffusion with Layouts

no code implementations • ICCV 2023 • BinBin Yang, Yi Luo, Ziliang Chen, Guangrun Wang, Xiaodan Liang, Liang Lin

Thanks to the rapid development of diffusion models, unprecedented progress has been witnessed in image synthesis.

MixReorg: Cross-Modal Mixed Patch Reorganization is a Good Mask Learner for Open-World Semantic Segmentation

no code implementations • ICCV 2023 • Kaixin Cai, Pengzhen Ren, Yi Zhu, Hang Xu, Jianzhuang Liu, Changlin Li, Guangrun Wang, Xiaodan Liang

To address this issue, we propose MixReorg, a novel and straightforward pre-training paradigm for semantic segmentation that enhances a model's ability to reorganize patches mixed across images, exploring both local visual relevance and global semantic coherence.

FULLER: Unified Multi-modality Multi-task 3D Perception via Multi-level Gradient Calibration

no code implementations • ICCV 2023 • Zhijian Huang, Sihao Lin, Guiyu Liu, Mukun Luo, Chaoqiang Ye, Hang Xu, Xiaojun Chang, Xiaodan Liang

Specifically, the gradients, produced by the task heads and used to update the shared backbone, will be calibrated at the backbone's last layer to alleviate the task conflict.

Fashion Matrix: Editing Photos by Just Talking

1 code implementation • 25 Jul 2023 • Zheng Chong, Xujie Zhang, Fuwei Zhao, Zhenyu Xie, Xiaodan Liang

The utilization of Large Language Models (LLMs) for the construction of AI systems has garnered significant attention across diverse fields.

Surfer: Progressive Reasoning with World Models for Robotic Manipulation

no code implementations • 20 Jun 2023 • Pengzhen Ren, Kaidong Zhang, Hetao Zheng, Zixuan Li, Yuhang Wen, Fengda Zhu, Mas Ma, Xiaodan Liang

To conduct a comprehensive and systematic evaluation of the robot manipulation model in terms of language understanding and physical execution, we also created a robotic manipulation benchmark with progressive reasoning tasks, called SeaWave.

CorNav: Autonomous Agent with Self-Corrected Planning for Zero-Shot Vision-and-Language Navigation

no code implementations • 17 Jun 2023 • Xiwen Liang, Liang Ma, Shanshan Guo, Jianhua Han, Hang Xu, Shikui Ma, Xiaodan Liang

Understanding and following natural language instructions while navigating through complex, real-world environments poses a significant challenge for general-purpose robots.

UniDiff: Advancing Vision-Language Models with Generative and Discriminative Learning

no code implementations • 1 Jun 2023 • Xiao Dong, Runhui Huang, XiaoYong Wei, Zequn Jie, Jianxing Yu, Jian Yin, Xiaodan Liang

Recent advances in vision-language pre-training have enabled machines to perform better in multimodal object discrimination (e.g., image-text semantic alignment) and image synthesis (e.g., text-to-image generation).

RealignDiff: Boosting Text-to-Image Diffusion Model with Coarse-to-fine Semantic Re-alignment

1 code implementation • 31 May 2023 • Guian Fang, Zutao Jiang, Jianhua Han, Guansong Lu, Hang Xu, Shengcai Liao, Xiaodan Liang

Recent advances in text-to-image diffusion models have achieved remarkable success in generating high-quality, realistic images from textual descriptions.

Boosting Visual-Language Models by Exploiting Hard Samples

1 code implementation • 9 May 2023 • Haonan Wang, Minbin Huang, Runhui Huang, Lanqing Hong, Hang Xu, Tianyang Hu, Xiaodan Liang, Zhenguo Li, Hong Cheng, Kenji Kawaguchi

In this work, we present HELIP, a cost-effective strategy tailored to enhance the performance of existing CLIP models without the need for training a model from scratch or collecting additional data.

Towards Medical Artificial General Intelligence via Knowledge-Enhanced Multimodal Pretraining

1 code implementation • 26 Apr 2023 • Bingqian Lin, Zicong Chen, Mingjie Li, Haokun Lin, Hang Xu, Yi Zhu, Jianzhuang Liu, Wenjia Cai, Lei Yang, Shen Zhao, Chenfei Wu, Ling Chen, Xiaojun Chang, Yi Yang, Lei Xing, Xiaodan Liang

In MOTOR, we combine two kinds of basic medical knowledge, i.e., general and specific knowledge, in a complementary manner to boost the general pretraining process.

LiDAR-NeRF: Novel LiDAR View Synthesis via Neural Radiance Fields

1 code implementation • 20 Apr 2023 • Tang Tao, Longfei Gao, Guangrun Wang, Yixing Lao, Peng Chen, Hengshuang Zhao, Dayang Hao, Xiaodan Liang, Mathieu Salzmann, Kaicheng Yu

We address this challenge by formulating, to the best of our knowledge, the first differentiable end-to-end LiDAR rendering framework, LiDAR-NeRF, leveraging a neural radiance field (NeRF) to facilitate the joint learning of geometry and the attributes of 3D points.

DetCLIPv2: Scalable Open-Vocabulary Object Detection Pre-training via Word-Region Alignment

no code implementations • CVPR 2023 • Lewei Yao, Jianhua Han, Xiaodan Liang, Dan Xu, Wei zhang, Zhenguo Li, Hang Xu

This paper presents DetCLIPv2, an efficient and scalable training framework that incorporates large-scale image-text pairs to achieve open-vocabulary object detection (OVD).

GP-VTON: Towards General Purpose Virtual Try-on via Collaborative Local-Flow Global-Parsing Learning

1 code implementation • CVPR 2023 • Zhenyu Xie, Zaiyu Huang, Xin Dong, Fuwei Zhao, Haoye Dong, Xijin Zhang, Feida Zhu, Xiaodan Liang

Specifically, compared with the previous global warping mechanism, LFGP employs local flows to warp garment parts individually, and assembles the local warped results via the global garment parsing, resulting in reasonable warped parts and a semantically correct intact garment even with challenging inputs. On the other hand, our DGT training strategy dynamically truncates the gradient in the overlap area, so the warped garment is no longer required to meet the boundary constraint, which effectively avoids the texture squeezing problem.

CLIP$^2$: Contrastive Language-Image-Point Pretraining from Real-World Point Cloud Data

no code implementations • 22 Mar 2023 • Yihan Zeng, Chenhan Jiang, Jiageng Mao, Jianhua Han, Chaoqiang Ye, Qingqiu Huang, Dit-yan Yeung, Zhen Yang, Xiaodan Liang, Hang Xu

Contrastive Language-Image Pre-training, benefiting from large-scale unlabeled text-image pairs, has demonstrated great performance in open-world vision understanding tasks.

Ranked #3 on Zero-shot 3D Point Cloud Classification on ScanNetV2

Dynamic Graph Enhanced Contrastive Learning for Chest X-ray Report Generation

1 code implementation • CVPR 2023 • Mingjie Li, Bingqian Lin, Zicong Chen, Haokun Lin, Xiaodan Liang, Xiaojun Chang

To address the limitation, we propose a knowledge graph with Dynamic structure and nodes to facilitate medical report generation with Contrastive Learning, named DCL.

CapDet: Unifying Dense Captioning and Open-World Detection Pretraining

no code implementations • CVPR 2023 • Yanxin Long, Youpeng Wen, Jianhua Han, Hang Xu, Pengzhen Ren, Wei zhang, Shen Zhao, Xiaodan Liang

Besides, our CapDet also achieves state-of-the-art performance on dense captioning tasks, e.g., 15.44% mAP on VG V1.2 and 13.98% on the VG-COCO dataset.

Visual Exemplar Driven Task-Prompting for Unified Perception in Autonomous Driving

no code implementations • CVPR 2023 • Xiwen Liang, Minzhe Niu, Jianhua Han, Hang Xu, Chunjing Xu, Xiaodan Liang

Multi-task learning has emerged as a powerful paradigm to solve a range of tasks simultaneously with good efficiency in both computation resources and inference time.

Actional Atomic-Concept Learning for Demystifying Vision-Language Navigation

no code implementations • 13 Feb 2023 • Bingqian Lin, Yi Zhu, Xiaodan Liang, Liang Lin, Jianzhuang Liu

Vision-Language Navigation (VLN) is a challenging task which requires an agent to align complex visual observations to language instructions to reach the goal position.

ViewCo: Discovering Text-Supervised Segmentation Masks via Multi-View Semantic Consistency

1 code implementation • 31 Jan 2023 • Pengzhen Ren, Changlin Li, Hang Xu, Yi Zhu, Guangrun Wang, Jianzhuang Liu, Xiaojun Chang, Xiaodan Liang

Specifically, we first propose text-to-views consistency modeling to learn correspondence for multiple views of the same input image.

CLIP2: Contrastive Language-Image-Point Pretraining From Real-World Point Cloud Data

no code implementations • CVPR 2023 • Yihan Zeng, Chenhan Jiang, Jiageng Mao, Jianhua Han, Chaoqiang Ye, Qingqiu Huang, Dit-yan Yeung, Zhen Yang, Xiaodan Liang, Hang Xu

Contrastive Language-Image Pre-training, benefiting from large-scale unlabeled text-image pairs, has demonstrated great performance in open-world vision understanding tasks.

Learning To Segment Every Referring Object Point by Point

1 code implementation • CVPR 2023 • Mengxue Qu, Yu Wu, Yunchao Wei, Wu Liu, Xiaodan Liang, Yao Zhao

Extensive experiments show that our model achieves 52.06% in terms of accuracy (versus 58.93% in the fully supervised setting) on RefCOCO+@testA, when only using 1% of the mask annotations.

CTP: Towards Vision-Language Continual Pretraining via Compatible Momentum Contrast and Topology Preservation

1 code implementation • ICCV 2023 • Hongguang Zhu, Yunchao Wei, Xiaodan Liang, Chunjie Zhang, Yao Zhao

Given the growing nature of real-world data, such an offline training paradigm on ever-expanding data is unsustainable, because models lack the continual learning ability to accumulate knowledge constantly.

NLIP: Noise-robust Language-Image Pre-training

no code implementations • 14 Dec 2022 • Runhui Huang, Yanxin Long, Jianhua Han, Hang Xu, Xiwen Liang, Chunjing Xu, Xiaodan Liang

Large-scale cross-modal pre-training paradigms have recently shown ubiquitous success on a wide range of downstream tasks, e.g., zero-shot classification, retrieval and image captioning.

UniGeo: Unifying Geometry Logical Reasoning via Reformulating Mathematical Expression

1 code implementation • 6 Dec 2022 • Jiaqi Chen, Tong Li, Jinghui Qin, Pan Lu, Liang Lin, Chongyu Chen, Xiaodan Liang

Naturally, we also present a unified multi-task Geometric Transformer framework, Geoformer, to tackle calculation and proving problems simultaneously in the form of sequence generation, which shows that the reasoning ability can be improved on both tasks by unifying the formulation.

Ranked #3 on Mathematical Reasoning on PGPS9K

CoupAlign: Coupling Word-Pixel with Sentence-Mask Alignments for Referring Image Segmentation

no code implementations • 4 Dec 2022 • ZiCheng Zhang, Yi Zhu, Jianzhuang Liu, Xiaodan Liang, Wei Ke

Then in the Sentence-Mask Alignment (SMA) module, the masks are weighted by the sentence embedding to localize the referred object, and finally projected back to aggregate the pixels for the target.

3D-TOGO: Towards Text-Guided Cross-Category 3D Object Generation

no code implementations • 2 Dec 2022 • Zutao Jiang, Guansong Lu, Xiaodan Liang, Jihua Zhu, Wei zhang, Xiaojun Chang, Hang Xu

Here, we make the first attempt to achieve generic text-guided cross-category 3D object generation via a new 3D-TOGO model, which integrates a text-to-views generation module and a views-to-3D generation module.

Towards Hard-pose Virtual Try-on via 3D-aware Global Correspondence Learning

1 code implementation • 25 Nov 2022 • Zaiyu Huang, Hanhui Li, Zhenyu Xie, Michael Kampffmeyer, Qingling Cai, Xiaodan Liang

Existing methods are restricted in this setting as they estimate garment warping flows mainly based on 2D poses and appearance, which omits the geometric prior of the 3D human body shape.

Structure-Preserving 3D Garment Modeling with Neural Sewing Machines

no code implementations • 12 Nov 2022 • Xipeng Chen, Guangrun Wang, Dizhong Zhu, Xiaodan Liang, Philip H. S. Torr, Liang Lin

In this paper, we propose a novel Neural Sewing Machine (NSM), a learning-based framework for structure-preserving 3D garment modeling, which is capable of learning representations for garments with diverse shapes and topologies and is successfully applied to 3D garment reconstruction and controllable manipulation.

Fine-grained Visual-Text Prompt-Driven Self-Training for Open-Vocabulary Object Detection

no code implementations • 2 Nov 2022 • Yanxin Long, Jianhua Han, Runhui Huang, Xu Hang, Yi Zhu, Chunjing Xu, Xiaodan Liang

Inspired by the success of vision-language methods (VLMs) in zero-shot classification, recent works attempt to extend this line of work into object detection by leveraging the localization ability of pre-trained VLMs and generating pseudo labels for unseen classes in a self-training manner.

MetaLogic: Logical Reasoning Explanations with Fine-Grained Structure

3 code implementations • 22 Oct 2022 • Yinya Huang, Hongming Zhang, Ruixin Hong, Xiaodan Liang, ChangShui Zhang, Dong Yu

To this end, we propose a comprehensive logical reasoning explanation form.

Learning Self-Regularized Adversarial Views for Self-Supervised Vision Transformers

1 code implementation • 16 Oct 2022 • Tao Tang, Changlin Li, Guangrun Wang, Kaicheng Yu, Xiaojun Chang, Xiaodan Liang

Despite the success, its development and application on self-supervised vision transformers have been hindered by several barriers, including the high search cost, the lack of supervision, and the unsuitable search space.

MARLlib: A Scalable and Efficient Multi-agent Reinforcement Learning Library

1 code implementation • 11 Oct 2022 • Siyi Hu, Yifan Zhong, Minquan Gao, Weixun Wang, Hao Dong, Xiaodan Liang, Zhihui Li, Xiaojun Chang, Yaodong Yang

A significant challenge facing researchers in the area of multi-agent reinforcement learning (MARL) pertains to the identification of a library that can offer fast and compatible development for multi-agent tasks and algorithm combinations, while obviating the need to consider compatibility issues.

Multi-agent Reinforcement Learning

reinforcement-learning

Improving Multi-turn Emotional Support Dialogue Generation with Lookahead Strategy Planning

1 code implementation • 9 Oct 2022 • Yi Cheng, Wenge Liu, Wenjie Li, Jiashuo Wang, Ruihui Zhao, Bang Liu, Xiaodan Liang, Yefeng Zheng

Providing Emotional Support (ES) to soothe people in emotional distress is an essential capability in social interactions.

DetCLIP: Dictionary-Enriched Visual-Concept Paralleled Pre-training for Open-world Detection

no code implementations • 20 Sep 2022 • Lewei Yao, Jianhua Han, Youpeng Wen, Xiaodan Liang, Dan Xu, Wei zhang, Zhenguo Li, Chunjing Xu, Hang Xu

We further design a concept dictionary (with descriptions) from various online sources and detection datasets to provide prior knowledge for each concept.

Effective Adaptation in Multi-Task Co-Training for Unified Autonomous Driving

no code implementations • 19 Sep 2022 • Xiwen Liang, Yangxin Wu, Jianhua Han, Hang Xu, Chunjing Xu, Xiaodan Liang

Aiming towards a holistic understanding of multiple downstream tasks simultaneously, there is a need for extracting features with better transferability.

ARMANI: Part-level Garment-Text Alignment for Unified Cross-Modal Fashion Design

no code implementations • 11 Aug 2022 • Xujie Zhang, Yu Sha, Michael C. Kampffmeyer, Zhenyu Xie, Zequn Jie, Chengwen Huang, Jianqing Peng, Xiaodan Liang

ARMANI discretizes an image into uniform tokens based on a learned cross-modal codebook in its first stage and uses a Transformer to model the distribution of image tokens for a real image given the tokens of the control signals in its second stage.

Composable Text Controls in Latent Space with ODEs

1 code implementation • 1 Aug 2022 • Guangyi Liu, Zeyu Feng, Yuan Gao, Zichao Yang, Xiaodan Liang, Junwei Bao, Xiaodong He, Shuguang Cui, Zhen Li, Zhiting Hu

This paper proposes a new efficient approach for composable text operations in the compact latent space of text.

Ranked #2 on Unsupervised Text Style Transfer on Yelp

SiRi: A Simple Selective Retraining Mechanism for Transformer-based Visual Grounding

1 code implementation • 27 Jul 2022 • Mengxue Qu, Yu Wu, Wu Liu, Qiqi Gong, Xiaodan Liang, Olga Russakovsky, Yao Zhao, Yunchao Wei

Particularly, SiRi conveys a significant principle to the research of visual grounding, i.e., a better initialized vision-language encoder would help the model converge to a better local minimum, advancing the performance accordingly.

PASTA-GAN++: A Versatile Framework for High-Resolution Unpaired Virtual Try-on

no code implementations • 27 Jul 2022 • Zhenyu Xie, Zaiyu Huang, Fuwei Zhao, Haoye Dong, Michael Kampffmeyer, Xin Dong, Feida Zhu, Xiaodan Liang

In this work, we take a step forward to explore versatile virtual try-on solutions, which we argue should possess three main properties, namely, they should support unsupervised training, arbitrary garment categories, and controllable garment editing.

Open-world Semantic Segmentation via Contrasting and Clustering Vision-Language Embedding

no code implementations • 18 Jul 2022 • Quande Liu, Youpeng Wen, Jianhua Han, Chunjing Xu, Hang Xu, Xiaodan Liang

To bridge the gap between supervised semantic segmentation and real-world applications that require one model to recognize arbitrary new concepts, recent zero-shot segmentation has attracted a lot of attention by exploring the relationships between unseen and seen object categories, yet it requires large amounts of densely annotated data with diverse base classes.

Discourse-Aware Graph Networks for Textual Logical Reasoning

no code implementations • 4 Jul 2022 • Yinya Huang, Lemao Liu, Kun Xu, Meng Fang, Liang Lin, Xiaodan Liang

In this work, we propose logic structural-constraint modeling to solve the logical reasoning QA and introduce discourse-aware graph networks (DAGNs).

Entity-Graph Enhanced Cross-Modal Pretraining for Instance-level Product Retrieval

no code implementations • 17 Jun 2022 • Xiao Dong, Xunlin Zhan, Yunchao Wei, XiaoYong Wei, YaoWei Wang, Minlong Lu, Xiaochun Cao, Xiaodan Liang

Our goal in this research is to study a more realistic environment in which we can conduct weakly-supervised multi-modal instance-level product retrieval for fine-grained product categories.

Cross-modal Clinical Graph Transformer for Ophthalmic Report Generation

no code implementations • CVPR 2022 • Mingjie Li, Wenjia Cai, Karin Verspoor, Shirui Pan, Xiaodan Liang, Xiaojun Chang

To endow models with the capability of incorporating expert knowledge, we propose a Cross-modal clinical Graph Transformer (CGT) for ophthalmic report generation (ORG), in which clinical relation triples are injected into the visual features as prior knowledge to drive the decoding procedure.

Policy Diagnosis via Measuring Role Diversity in Cooperative Multi-agent RL

no code implementations • 1 Jun 2022 • Siyi Hu, Chuanlong Xie, Xiaodan Liang, Xiaojun Chang

In this study, we quantify the agent's behavior difference and build its relationship with the policy performance via Role Diversity, a metric to measure the characteristics of MARL tasks.

ADAPT: Vision-Language Navigation with Modality-Aligned Action Prompts

no code implementations • CVPR 2022 • Bingqian Lin, Yi Zhu, Zicong Chen, Xiwen Liang, Jianzhuang Liu, Xiaodan Liang

Vision-Language Navigation (VLN) is a challenging task that requires an embodied agent to perform action-level modality alignment, i.e., make instruction-asked actions sequentially in complex visual environments.

Benchmarking the Robustness of LiDAR-Camera Fusion for 3D Object Detection

1 code implementation • 30 May 2022 • Kaicheng Yu, Tang Tao, Hongwei Xie, Zhiwei Lin, Zhongwei Wu, Zhongyu Xia, TingTing Liang, Haiyang Sun, Jiong Deng, Dayang Hao, Yongtao Wang, Xiaodan Liang, Bing Wang

There are two critical sensors for 3D perception in autonomous driving, the camera and the LiDAR.

Self-Guided Noise-Free Data Generation for Efficient Zero-Shot Learning

2 code implementations • 25 May 2022 • Jiahui Gao, Renjie Pi, Yong Lin, Hang Xu, Jiacheng Ye, Zhiyong Wu, Weizhong Zhang, Xiaodan Liang, Zhenguo Li, Lingpeng Kong

In this paradigm, the synthesized data from the PLM acts as the carrier of knowledge, which is used to train a task-specific model with orders of magnitude fewer parameters than the PLM, achieving both higher performance and efficiency than prompt-based zero-shot learning methods on PLMs.

Knowledge Distillation via the Target-aware Transformer

2 code implementations • CVPR 2022 • Sihao Lin, Hongwei Xie, Bing Wang, Kaicheng Yu, Xiaojun Chang, Xiaodan Liang, Gang Wang

To this end, we propose a novel one-to-all spatial matching knowledge distillation approach.

LogicSolver: Towards Interpretable Math Word Problem Solving with Logical Prompt-enhanced Learning

2 code implementations • 17 May 2022 • Zhicheng Yang, Jinghui Qin, Jiaqi Chen, Liang Lin, Xiaodan Liang

To address this issue and take a step towards interpretable MWP solving, we first construct a high-quality MWP dataset named InterMWP, which consists of 11,495 MWPs and annotates interpretable logical formulas based on algebraic knowledge as the grounded linguistic logic of each solution equation.

Unbiased Math Word Problems Benchmark for Mitigating Solving Bias

2 code implementations • Findings (NAACL) 2022 • Zhicheng Yang, Jinghui Qin, Jiaqi Chen, Xiaodan Liang

However, current solvers exhibit solving bias, which consists of data bias and learning bias caused by biased datasets and improper training strategies.

Continual Object Detection via Prototypical Task Correlation Guided Gating Mechanism

1 code implementation • CVPR 2022 • BinBin Yang, Xinchi Deng, Han Shi, Changlin Li, Gengwei Zhang, Hang Xu, Shen Zhao, Liang Lin, Xiaodan Liang

To make ROSETTA automatically determine which experience is available and useful, a prototypical task correlation guided Gating Diversity Controller (GDC) is introduced to adaptively adjust the diversity of gates for the new task based on class-specific prototypes.

"My nose is running.""Are you also coughing?": Building A Medical Diagnosis Agent with Interpretable Inquiry Logics

1 code implementation • 29 Apr 2022 • Wenge Liu, Yi Cheng, Hao Wang, Jianheng Tang, Yafei Liu, Ruihui Zhao, Wenjie Li, Yefeng Zheng, Xiaodan Liang

In this paper, we explore how to bring interpretability to data-driven DSMD.

Arch-Graph: Acyclic Architecture Relation Predictor for Task-Transferable Neural Architecture Search

1 code implementation • CVPR 2022 • Minbin Huang, Zhijian Huang, Changlin Li, Xin Chen, Hang Xu, Zhenguo Li, Xiaodan Liang

It is able to find top 0.16% and 0.29% architectures on average on two search spaces under the budget of only 50 models.

Dressing in the Wild by Watching Dance Videos

no code implementations • CVPR 2022 • Xin Dong, Fuwei Zhao, Zhenyu Xie, Xijin Zhang, Daniel K. Du, Min Zheng, Xiang Long, Xiaodan Liang, Jianchao Yang

While significant progress has been made in garment transfer, one of the most applicable directions of human-centric image generation, existing works overlook the in-the-wild imagery, presenting severe garment-person misalignment as well as noticeable degradation in fine texture details.

Automated Progressive Learning for Efficient Training of Vision Transformers

1 code implementation • CVPR 2022 • Changlin Li, Bohan Zhuang, Guangrun Wang, Xiaodan Liang, Xiaojun Chang, Yi Yang

First, we develop a strong manual baseline for progressive learning of ViTs, by introducing momentum growth (MoGrow) to bridge the gap brought by model growth.

Beyond Fixation: Dynamic Window Visual Transformer

1 code implementation • CVPR 2022 • Pengzhen Ren, Changlin Li, Guangrun Wang, Yun Xiao, Qing Du, Xiaodan Liang, Xiaojun Chang

Recently, there has been a surge of interest in visual transformers that reduce the computational cost by limiting the calculation of self-attention to a local window.

Laneformer: Object-aware Row-Column Transformers for Lane Detection

no code implementations • 18 Mar 2022 • Jianhua Han, Xiajun Deng, Xinyue Cai, Zhen Yang, Hang Xu, Chunjing Xu, Xiaodan Liang

We present Laneformer, a conceptually simple yet powerful transformer-based architecture tailored for lane detection that is a long-standing research topic for visual perception in autonomous driving.

elBERto: Self-supervised Commonsense Learning for Question Answering

no code implementations • 17 Mar 2022 • Xunlin Zhan, Yuan Li, Xiao Dong, Xiaodan Liang, Zhiting Hu, Lawrence Carin

Commonsense question answering requires reasoning about everyday situations and causes and effects implicit in context.

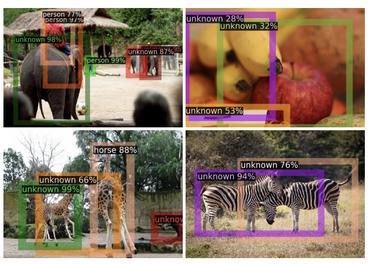

CODA: A Real-World Road Corner Case Dataset for Object Detection in Autonomous Driving

no code implementations • 15 Mar 2022 • Kaican Li, Kai Chen, Haoyu Wang, Lanqing Hong, Chaoqiang Ye, Jianhua Han, Yukuai Chen, Wei zhang, Chunjing Xu, Dit-yan Yeung, Xiaodan Liang, Zhenguo Li, Hang Xu

One main reason that impedes the development of truly reliable self-driving systems is the lack of public datasets for evaluating the performance of object detectors on corner cases.

Visual-Language Navigation Pretraining via Prompt-based Environmental Self-exploration

1 code implementation • ACL 2022 • Xiwen Liang, Fengda Zhu, Lingling Li, Hang Xu, Xiaodan Liang

To improve the ability of fast cross-domain adaptation, we propose Prompt-based Environmental Self-exploration (ProbES), which can self-explore the environments by sampling trajectories and automatically generate structured instructions via a large-scale cross-modal pretrained model (CLIP).

Modern Augmented Reality: Applications, Trends, and Future Directions

no code implementations • 18 Feb 2022 • Shervin Minaee, Xiaodan Liang, Shuicheng Yan

Augmented reality (AR) is one of the relatively old, yet trending areas in the intersection of computer vision and computer graphics with numerous applications in several areas, from gaming and entertainment, to education and healthcare.

Revisiting Over-smoothing in BERT from the Perspective of Graph

no code implementations • ICLR 2022 • Han Shi, Jiahui Gao, Hang Xu, Xiaodan Liang, Zhenguo Li, Lingpeng Kong, Stephen M. S. Lee, James T. Kwok

Recently, the over-smoothing phenomenon of Transformer-based models has been observed in both the vision and language fields.

Wukong: A 100 Million Large-scale Chinese Cross-modal Pre-training Benchmark

1 code implementation • 14 Feb 2022 • Jiaxi Gu, Xiaojun Meng, Guansong Lu, Lu Hou, Minzhe Niu, Xiaodan Liang, Lewei Yao, Runhui Huang, Wei zhang, Xin Jiang, Chunjing Xu, Hang Xu

Experiments show that Wukong can serve as a promising Chinese pre-training dataset and benchmark for different cross-modal learning methods.

Ranked #6 on

Image Retrieval

on MUGE Retrieval

Exploring Inter-Channel Correlation for Diversity-preserved Knowledge Distillation

1 code implementation • 8 Feb 2022 • Li Liu, Qingle Huang, Sihao Lin, Hongwei Xie, Bing Wang, Xiaojun Chang, Xiaodan Liang

Extensive experiments on two vision tasks, including ImageNet classification and Pascal VOC segmentation, demonstrate the superiority of our ICKD, which consistently outperforms many existing methods, advancing the state-of-the-art in the fields of Knowledge Distillation.

BodyGAN: General-Purpose Controllable Neural Human Body Generation

no code implementations • CVPR 2022 • Chaojie Yang, Hanhui Li, Shengjie Wu, Shengkai Zhang, Haonan Yan, Nianhong Jiao, Jie Tang, Runnan Zhou, Xiaodan Liang, Tianxiang Zheng

This is because current methods mainly rely on a single pose/appearance model, which is limited in disentangling various poses and appearance in human images.

Contrastive Instruction-Trajectory Learning for Vision-Language Navigation

1 code implementation • 8 Dec 2021 • Xiwen Liang, Fengda Zhu, Yi Zhu, Bingqian Lin, Bing Wang, Xiaodan Liang

The vision-language navigation (VLN) task requires an agent to reach a target with the guidance of natural language instruction.

Towards Scalable Unpaired Virtual Try-On via Patch-Routed Spatially-Adaptive GAN

1 code implementation • NeurIPS 2021 • Zhenyu Xie, Zaiyu Huang, Fuwei Zhao, Haoye Dong, Michael Kampffmeyer, Xiaodan Liang

Image-based virtual try-on is one of the most promising applications of human-centric image generation due to its tremendous real-world potential.

FILIP: Fine-grained Interactive Language-Image Pre-Training

1 code implementation • ICLR 2022 • Lewei Yao, Runhui Huang, Lu Hou, Guansong Lu, Minzhe Niu, Hang Xu, Xiaodan Liang, Zhenguo Li, Xin Jiang, Chunjing Xu

In this paper, we introduce a large-scale Fine-grained Interactive Language-Image Pre-training (FILIP) to achieve finer-level alignment through a cross-modal late interaction mechanism, which uses a token-wise maximum similarity between visual and textual tokens to guide the contrastive objective.
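
The token-wise maximum similarity mentioned above can be illustrated with a minimal sketch. This is not the authors' implementation: the function names, the use of cosine similarity, and the averaging of per-token maxima in each direction are assumptions made for illustration only.

```python
from math import sqrt


def l2_normalize(vec):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def dot(a, b):
    """Plain dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def tokenwise_max_similarity(image_tokens, text_tokens):
    """Return (image-to-text, text-to-image) late-interaction similarities.

    For each token in one modality, take its maximum cosine similarity over
    all tokens of the other modality, then average those maxima.
    The averaging step is an assumption of this sketch.
    """
    img = [l2_normalize(t) for t in image_tokens]
    txt = [l2_normalize(t) for t in text_tokens]
    i2t = sum(max(dot(i, t) for t in txt) for i in img) / len(img)
    t2i = sum(max(dot(t, i) for i in img) for t in txt) / len(txt)
    return i2t, t2i


# Hypothetical toy embeddings: two image patches, one text token.
image_tokens = [[1.0, 0.0], [0.0, 1.0]]
text_tokens = [[2.0, 0.0]]
i2t, t2i = tokenwise_max_similarity(image_tokens, text_tokens)
```

In a contrastive setup, scores of this kind would stand in for the single global image-text similarity, computed for every image-text pair in a batch.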

UltraPose: Synthesizing Dense Pose with 1 Billion Points by Human-body Decoupling 3D Model

1 code implementation • ICCV 2021 • Haonan Yan, Jiaqi Chen, Xujie Zhang, Shengkai Zhang, Nianhong Jiao, Xiaodan Liang, Tianxiang Zheng

However, the popular DensePose-COCO dataset relies on a sophisticated manual annotation system, leading to severe limitations in acquiring the denser and more accurate annotated pose resources.

Image Comes Dancing with Collaborative Parsing-Flow Video Synthesis

no code implementations • 27 Oct 2021 • Bowen Wu, Zhenyu Xie, Xiaodan Liang, Yubei Xiao, Haoye Dong, Liang Lin

The integration of human parsing and appearance flow effectively guides the generation of video frames with realistic appearance.

IconQA: A New Benchmark for Abstract Diagram Understanding and Visual Language Reasoning

1 code implementation • 25 Oct 2021 • Pan Lu, Liang Qiu, Jiaqi Chen, Tony Xia, Yizhou Zhao, Wei zhang, Zhou Yu, Xiaodan Liang, Song-Chun Zhu

Also, we develop a strong IconQA baseline Patch-TRM that applies a pyramid cross-modal Transformer with input diagram embeddings pre-trained on the icon dataset.

Ranked #1 on

Visual Question Answering (VQA)

on IconQA

Role Diversity Matters: A Study of Cooperative Training Strategies for Multi-Agent RL

no code implementations • 29 Sep 2021 • Siyi Hu, Chuanlong Xie, Xiaodan Liang, Xiaojun Chang

In addition, role diversity can help to find a better training strategy and increase performance in cooperative MARL.

DS-Net++: Dynamic Weight Slicing for Efficient Inference in CNNs and Transformers

1 code implementation • 21 Sep 2021 • Changlin Li, Guangrun Wang, Bing Wang, Xiaodan Liang, Zhihui Li, Xiaojun Chang

Dynamic networks have shown their promising capability in reducing theoretical computation complexity by adapting their architectures to the input during inference.

Wav-BERT: Cooperative Acoustic and Linguistic Representation Learning for Low-Resource Speech Recognition

no code implementations • Findings (EMNLP) 2021 • Guolin Zheng, Yubei Xiao, Ke Gong, Pan Zhou, Xiaodan Liang, Liang Lin

Specifically, we unify a pre-trained acoustic model (wav2vec 2.0) and a language model (BERT) into an end-to-end trainable framework.

EfficientBERT: Progressively Searching Multilayer Perceptron via Warm-up Knowledge Distillation

1 code implementation • Findings (EMNLP) 2021 • Chenhe Dong, Guangrun Wang, Hang Xu, Jiefeng Peng, Xiaozhe Ren, Xiaodan Liang

In this paper, we have a critical insight that improving the feed-forward network (FFN) in BERT has a higher gain than improving the multi-head attention (MHA), since the computational cost of FFN is 2 to 3 times larger than that of MHA.

M5Product: Self-harmonized Contrastive Learning for E-commercial Multi-modal Pretraining

no code implementations • CVPR 2022 • Xiao Dong, Xunlin Zhan, Yangxin Wu, Yunchao Wei, Michael C. Kampffmeyer, XiaoYong Wei, Minlong Lu, YaoWei Wang, Xiaodan Liang

Despite the potential of multi-modal pre-training to learn highly discriminative feature representations from complementary data modalities, current progress is being slowed by the lack of large-scale modality-diverse datasets.

Pyramid R-CNN: Towards Better Performance and Adaptability for 3D Object Detection

1 code implementation • ICCV 2021 • Jiageng Mao, Minzhe Niu, Haoyue Bai, Xiaodan Liang, Hang Xu, Chunjing Xu

To resolve the problems, we propose a novel second-stage module, named pyramid RoI head, to adaptively learn the features from the sparse points of interest.

Ranked #2 on

3D Object Detection

on waymo vehicle

(AP metric)

Voxel Transformer for 3D Object Detection

1 code implementation • ICCV 2021 • Jiageng Mao, Yujing Xue, Minzhe Niu, Haoyue Bai, Jiashi Feng, Xiaodan Liang, Hang Xu, Chunjing Xu

We present Voxel Transformer (VoTr), a novel and effective voxel-based Transformer backbone for 3D object detection from point clouds.

Ranked #3 on

3D Object Detection

on waymo vehicle

(L1 mAP metric)

Pi-NAS: Improving Neural Architecture Search by Reducing Supernet Training Consistency Shift

1 code implementation • ICCV 2021 • Jiefeng Peng, Jiqi Zhang, Changlin Li, Guangrun Wang, Xiaodan Liang, Liang Lin

We attribute this ranking correlation problem to the supernet training consistency shift, including feature shift and parameter shift.

FFA-IR: Towards an Explainable and Reliable Medical Report Generation Benchmark

1 code implementation • Thirty-fifth Conference on Neural Information Processing Systems Datasets and Benchmarks Track (Round 2) 2021 • Mingjie Li, Wenjia Cai, Rui Liu, Yuetian Weng, Xiaoyun Zhao, Cong Wang, Xin Chen, Zhong Liu, Caineng Pan, Mengke Li, Yizhi Liu, Flora D Salim, Karin Verspoor, Xiaodan Liang, Xiaojun Chang

Researchers have explored advanced methods from computer vision and natural language processing to incorporate medical domain knowledge for the generation of readable medical reports.

M3D-VTON: A Monocular-to-3D Virtual Try-On Network

1 code implementation • ICCV 2021 • Fuwei Zhao, Zhenyu Xie, Michael Kampffmeyer, Haoye Dong, Songfang Han, Tianxiang Zheng, Tao Zhang, Xiaodan Liang

Virtual 3D try-on can provide an intuitive and realistic view for online shopping and has a huge potential commercial value.

Medical-VLBERT: Medical Visual Language BERT for COVID-19 CT Report Generation With Alternate Learning

no code implementations • 11 Aug 2021 • Guangyi Liu, Yinghong Liao, Fuyu Wang, Bin Zhang, Lu Zhang, Xiaodan Liang, Xiang Wan, Shaolin Li, Zhen Li, Shuixing Zhang, Shuguang Cui

Medical imaging technologies, including computed tomography (CT) and chest X-Ray (CXR), are largely employed to facilitate the diagnosis of COVID-19.

NASOA: Towards Faster Task-oriented Online Fine-tuning with a Zoo of Models

no code implementations • ICCV 2021 • Hang Xu, Ning Kang, Gengwei Zhang, Chuanlong Xie, Xiaodan Liang, Zhenguo Li

Fine-tuning from pre-trained ImageNet models has been a simple, effective, and popular approach for various computer vision tasks.

WAS-VTON: Warping Architecture Search for Virtual Try-on Network

no code implementations • 1 Aug 2021 • Zhenyu Xie, Xujie Zhang, Fuwei Zhao, Haoye Dong, Michael C. Kampffmeyer, Haonan Yan, Xiaodan Liang

Despite recent progress on image-based virtual try-on, current methods are constrained by shared warping networks and thus fail to synthesize natural try-on results when faced with clothing categories that require different warping operations.

Product1M: Towards Weakly Supervised Instance-Level Product Retrieval via Cross-modal Pretraining

1 code implementation • ICCV 2021 • Xunlin Zhan, Yangxin Wu, Xiao Dong, Yunchao Wei, Minlong Lu, Yichi Zhang, Hang Xu, Xiaodan Liang

In this paper, we investigate a more realistic setting that aims to perform weakly-supervised multi-modal instance-level product retrieval among fine-grained product categories.

Adversarial Reinforced Instruction Attacker for Robust Vision-Language Navigation

1 code implementation • 23 Jul 2021 • Bingqian Lin, Yi Zhu, Yanxin Long, Xiaodan Liang, Qixiang Ye, Liang Lin

Specifically, we propose a Dynamic Reinforced Instruction Attacker (DR-Attacker), which learns to mislead the navigator to move to the wrong target by destroying the most instructive information in instructions at different timesteps.

AutoBERT-Zero: Evolving BERT Backbone from Scratch

no code implementations • 15 Jul 2021 • Jiahui Gao, Hang Xu, Han Shi, Xiaozhe Ren, Philip L. H. Yu, Xiaodan Liang, Xin Jiang, Zhenguo Li

Transformer-based pre-trained language models like BERT and its variants have recently achieved promising performance in various natural language processing (NLP) tasks.

Ranked #10 on

Semantic Textual Similarity

on MRPC

Deep Learning for Embodied Vision Navigation: A Survey

no code implementations • 7 Jul 2021 • Fengda Zhu, Yi Zhu, Vincent CS Lee, Xiaodan Liang, Xiaojun Chang

A navigation agent is supposed to have various intelligent skills, such as visual perception, mapping, planning, exploration, and reasoning.

Neural-Symbolic Solver for Math Word Problems with Auxiliary Tasks

1 code implementation • ACL 2021 • Jinghui Qin, Xiaodan Liang, Yining Hong, Jianheng Tang, Liang Lin

Previous math word problem solvers following the encoder-decoder paradigm fail to explicitly incorporate essential math symbolic constraints, leading to unexplainable and unreasonable predictions.

Don't Take It Literally: An Edit-Invariant Sequence Loss for Text Generation

1 code implementation • 29 Jun 2021 • Guangyi Liu, Zichao Yang, Tianhua Tao, Xiaodan Liang, Junwei Bao, Zhen Li, Xiaodong He, Shuguang Cui, Zhiting Hu

Such a training objective is sub-optimal when the target sequence is not perfect, e.g., when the target sequence is corrupted with noise, or when only weak sequence supervision is available.

One Million Scenes for Autonomous Driving: ONCE Dataset

1 code implementation • 21 Jun 2021 • Jiageng Mao, Minzhe Niu, Chenhan Jiang, Hanxue Liang, Jingheng Chen, Xiaodan Liang, Yamin Li, Chaoqiang Ye, Wei zhang, Zhenguo Li, Jie Yu, Hang Xu, Chunjing Xu

To facilitate future research on exploiting unlabeled data for 3D detection, we additionally provide a benchmark in which we reproduce and evaluate a variety of self-supervised and semi-supervised methods on the ONCE dataset.

SODA10M: A Large-Scale 2D Self/Semi-Supervised Object Detection Dataset for Autonomous Driving

no code implementations • 21 Jun 2021 • Jianhua Han, Xiwen Liang, Hang Xu, Kai Chen, Lanqing Hong, Jiageng Mao, Chaoqiang Ye, Wei zhang, Zhenguo Li, Xiaodan Liang, Chunjing Xu

Experiments show that SODA10M can serve as a promising pre-training dataset for different self-supervised learning methods, which gives superior performance when fine-tuning with different downstream tasks (i.e., detection, semantic/instance segmentation) in the autonomous driving domain.

Prototypical Graph Contrastive Learning

1 code implementation • 17 Jun 2021 • Shuai Lin, Pan Zhou, Zi-Yuan Hu, Shuojia Wang, Ruihui Zhao, Yefeng Zheng, Liang Lin, Eric Xing, Xiaodan Liang

However, since the negatives for a query are uniformly sampled from all graphs, existing methods suffer from the critical sampling bias issue, i.e., the negatives are likely to have the same semantic structure as the query, leading to performance degradation.

Vision-Language Navigation with Random Environmental Mixup

1 code implementation • ICCV 2021 • Chong Liu, Fengda Zhu, Xiaojun Chang, Xiaodan Liang, ZongYuan Ge, Yi-Dong Shen

Then, we cross-connect the key views of different scenes to construct augmented scenes.

Ranked #38 on

Vision and Language Navigation

on VLN Challenge

Towards Quantifiable Dialogue Coherence Evaluation

1 code implementation • ACL 2021 • Zheng Ye, Liucun Lu, Lishan Huang, Liang Lin, Xiaodan Liang

To address these limitations, we propose Quantifiable Dialogue Coherence Evaluation (QuantiDCE), a novel framework aiming to train a quantifiable dialogue coherence metric that can reflect the actual human rating standards.

GeoQA: A Geometric Question Answering Benchmark Towards Multimodal Numerical Reasoning

1 code implementation • Findings (ACL) 2021 • Jiaqi Chen, Jianheng Tang, Jinghui Qin, Xiaodan Liang, Lingbo Liu, Eric P. Xing, Liang Lin

Therefore, we propose a Geometric Question Answering dataset GeoQA, containing 4,998 geometric problems with corresponding annotated programs, which illustrate the solving process of the given problems.

Ranked #4 on

Mathematical Reasoning

on PGPS9K

TransNAS-Bench-101: Improving Transferability and Generalizability of Cross-Task Neural Architecture Search

2 code implementations • CVPR 2021 • Yawen Duan, Xin Chen, Hang Xu, Zewei Chen, Xiaodan Liang, Tong Zhang, Zhenguo Li

While existing NAS methods mostly design architectures on a single task, algorithms that look beyond single-task search are surging to pursue a more efficient and universal solution across various tasks.

Inter-GPS: Interpretable Geometry Problem Solving with Formal Language and Symbolic Reasoning

1 code implementation • ACL 2021 • Pan Lu, Ran Gong, Shibiao Jiang, Liang Qiu, Siyuan Huang, Xiaodan Liang, Song-Chun Zhu

We further propose a novel geometry solving approach with formal language and symbolic reasoning, called Interpretable Geometry Problem Solver (Inter-GPS).

Ranked #1 on

Mathematical Question Answering

on GeoS

SOON: Scenario Oriented Object Navigation with Graph-based Exploration

1 code implementation • CVPR 2021 • Fengda Zhu, Xiwen Liang, Yi Zhu, Xiaojun Chang, Xiaodan Liang

In this task, an agent is required to navigate from an arbitrary position in a 3D embodied environment to localize a target following a scene description.

Ranked #5 on

Visual Navigation

on SOON Test

DAGN: Discourse-Aware Graph Network for Logical Reasoning

2 code implementations • NAACL 2021 • Yinya Huang, Meng Fang, Yu Cao, LiWei Wang, Xiaodan Liang

The model encodes discourse information as a graph with elementary discourse units (EDUs) and discourse relations, and learns the discourse-aware features via a graph network for downstream QA tasks.

Ranked #24 on

Reading Comprehension

on ReClor

Dynamic Slimmable Network

1 code implementation • CVPR 2021 • Changlin Li, Guangrun Wang, Bing Wang, Xiaodan Liang, Zhihui Li, Xiaojun Chang

Here, we explore a dynamic network slimming regime, named Dynamic Slimmable Network (DS-Net), which aims to achieve good hardware-efficiency via dynamically adjusting filter numbers of networks at test time with respect to different inputs, while keeping filters stored statically and contiguously in hardware to prevent the extra burden.

BossNAS: Exploring Hybrid CNN-transformers with Block-wisely Self-supervised Neural Architecture Search

1 code implementation • ICCV 2021 • Changlin Li, Tao Tang, Guangrun Wang, Jiefeng Peng, Bing Wang, Xiaodan Liang, Xiaojun Chang

In this work, we present Block-wisely Self-supervised Neural Architecture Search (BossNAS), an unsupervised NAS method that addresses the problem of inaccurate architecture rating caused by large weight-sharing space and biased supervision in previous methods.

A Data-Centric Framework for Composable NLP Workflows

1 code implementation • EMNLP 2020 • Zhengzhong Liu, Guanxiong Ding, Avinash Bukkittu, Mansi Gupta, Pengzhi Gao, Atif Ahmed, Shikun Zhang, Xin Gao, Swapnil Singhavi, Linwei Li, Wei Wei, Zecong Hu, Haoran Shi, Haoying Zhang, Xiaodan Liang, Teruko Mitamura, Eric P. Xing, Zhiting Hu

Empirical natural language processing (NLP) systems in application domains (e.g., healthcare, finance, education) involve interoperation among multiple components, ranging from data ingestion, human annotation, to text retrieval, analysis, generation, and visualization.

SparseBERT: Rethinking the Importance Analysis in Self-attention

1 code implementation • 25 Feb 2021 • Han Shi, Jiahui Gao, Xiaozhe Ren, Hang Xu, Xiaodan Liang, Zhenguo Li, James T. Kwok

A surprising result is that diagonal elements in the attention map are the least important compared with other attention positions.

Loss Function Discovery for Object Detection via Convergence-Simulation Driven Search

1 code implementation • ICLR 2021 • Peidong Liu, Gengwei Zhang, Bochao Wang, Hang Xu, Xiaodan Liang, Yong Jiang, Zhenguo Li

For object detection, the well-established classification and regression loss functions have been carefully designed by considering diverse learning challenges.

GTAE: Graph-Transformer based Auto-Encoders for Linguistic-Constrained Text Style Transfer

no code implementations • 1 Feb 2021 • Yukai Shi, Sen Zhang, Chenxing Zhou, Xiaodan Liang, Xiaojun Yang, Liang Lin

Non-parallel text style transfer has attracted increasing research interest in recent years.

Graphonomy: Universal Image Parsing via Graph Reasoning and Transfer

2 code implementations • 26 Jan 2021 • Liang Lin, Yiming Gao, Ke Gong, Meng Wang, Xiaodan Liang

Prior highly-tuned image parsing models are usually studied in a certain domain with a specific set of semantic labels and can hardly be adapted into other scenarios (e.g., sharing discrepant label granularity) without extensive re-training.

UPDeT: Universal Multi-agent Reinforcement Learning via Policy Decoupling with Transformers

1 code implementation • 20 Jan 2021 • Siyi Hu, Fengda Zhu, Xiaojun Chang, Xiaodan Liang

Recent advances in multi-agent reinforcement learning have been largely limited to training one model from scratch for every new task.

Unifying Relational Sentence Generation and Retrieval for Medical Image Report Composition

no code implementations • 9 Jan 2021 • Fuyu Wang, Xiaodan Liang, Lin Xu, Liang Lin

Beyond generating long and topic-coherent paragraphs in traditional captioning tasks, the medical image report composition task poses more task-oriented challenges by requiring both the highly-accurate medical term diagnosis and multiple heterogeneous forms of information including impression and findings.

TransNAS-Bench-101: Improving Transferrability and Generalizability of Cross-Task Neural Architecture Search

2 code implementations • 1 Jan 2021 • Yawen Duan, Xin Chen, Hang Xu, Zewei Chen, Xiaodan Liang, Tong Zhang, Zhenguo Li

While existing NAS methods mostly design architectures on one single task, algorithms that look beyond single-task search are surging to pursue a more efficient and universal solution across various tasks.

CAT-SAC: Soft Actor-Critic with Curiosity-Aware Entropy Temperature

no code implementations • 1 Jan 2021 • Junfan Lin, Changxin Huang, Xiaodan Liang, Liang Lin

The curiosity is added to the target entropy to increase the entropy temperature for unfamiliar states and decrease the target entropy for familiar states.

NASOA: Towards Faster Task-oriented Online Fine-tuning

no code implementations • 1 Jan 2021 • Hang Xu, Ning Kang, Gengwei Zhang, Xiaodan Liang, Zhenguo Li

The resulting model zoo is more training efficient than SOTA NAS models, e.g., 6x faster than RegNetY-16GF and 1.7x faster than EfficientNetB3.

Exploring Geometry-Aware Contrast and Clustering Harmonization for Self-Supervised 3D Object Detection

no code implementations • ICCV 2021 • Hanxue Liang, Chenhan Jiang, Dapeng Feng, Xin Chen, Hang Xu, Xiaodan Liang, Wei zhang, Zhenguo Li, Luc van Gool

Here we present a novel self-supervised 3D object detection framework that seamlessly integrates the geometry-aware contrast and clustering harmonization to lift the unsupervised 3D representation learning, named GCC-3D.

Linguistically Routing Capsule Network for Out-of-Distribution Visual Question Answering

no code implementations • ICCV 2021 • Qingxing Cao, Wentao Wan, Keze Wang, Xiaodan Liang, Liang Lin

The experimental results show that our proposed method can improve current VQA models on OOD split without losing performance on the in-domain test data.

Exploring Inter-Channel Correlation for Diversity-Preserved Knowledge Distillation

2 code implementations • ICCV 2021 • Li Liu, Qingle Huang, Sihao Lin, Hongwei Xie, Bing Wang, Xiaojun Chang, Xiaodan Liang

Extensive experiments on two vision tasks, including ImageNet classification and Pascal VOC segmentation, demonstrate the superiority of our ICKD, which consistently outperforms many existing methods, advancing the state-of-the-art in the fields of Knowledge Distillation.

Ranked #21 on

Knowledge Distillation

on ImageNet

UPDeT: Universal Multi-agent RL via Policy Decoupling with Transformers

no code implementations • ICLR 2021 • Siyi Hu, Fengda Zhu, Xiaojun Chang, Xiaodan Liang

Recent advances in multi-agent reinforcement learning have been largely limited to training one model from scratch for every new task.

Self-Motivated Communication Agent for Real-World Vision-Dialog Navigation

no code implementations • ICCV 2021 • Yi Zhu, Yue Weng, Fengda Zhu, Xiaodan Liang, Qixiang Ye, Yutong Lu, Jianbin Jiao

Vision-Dialog Navigation (VDN) requires an agent to ask questions and navigate following the human responses to find target objects.

Erasure for Advancing: Dynamic Self-Supervised Learning for Commonsense Reasoning

no code implementations • 1 Jan 2021 • Fuyu Wang, Pan Zhou, Xiaodan Liang, Liang Lin

To solve this issue, we propose a novel DynamIc Self-sUperviSed Erasure (DISUSE) which adaptively erases redundant and artifactual clues in the context and questions to learn and establish the correct corresponding pair relations between the questions and their clues.

REM-Net: Recursive Erasure Memory Network for Commonsense Evidence Refinement

no code implementations • 24 Dec 2020 • Yinya Huang, Meng Fang, Xunlin Zhan, Qingxing Cao, Xiaodan Liang, Liang Lin

It is crucial since the quality of the evidence is the key to answering commonsense questions, and even determines the upper bound on the QA system's performance.

Graph-Evolving Meta-Learning for Low-Resource Medical Dialogue Generation

1 code implementation • 22 Dec 2020 • Shuai Lin, Pan Zhou, Xiaodan Liang, Jianheng Tang, Ruihui Zhao, Ziliang Chen, Liang Lin

Besides, we develop a Graph-Evolving Meta-Learning (GEML) framework that learns to evolve the commonsense graph for reasoning about disease-symptom correlations in a new disease, which effectively alleviates the need for a large number of dialogues.

Adversarial Meta Sampling for Multilingual Low-Resource Speech Recognition

no code implementations • 22 Dec 2020 • Yubei Xiao, Ke Gong, Pan Zhou, Guolin Zheng, Xiaodan Liang, Liang Lin

When sampling tasks in MML-ASR, AMS adaptively determines the task sampling probability for each source language.

Automatic Speech Recognition

Automatic Speech Recognition (ASR)

+3

Knowledge-Routed Visual Question Reasoning: Challenges for Deep Representation Embedding

1 code implementation • 14 Dec 2020 • Qingxing Cao, Bailin Li, Xiaodan Liang, Keze Wang, Liang Lin

Specifically, we generate the question-answer pair based on both the Visual Genome scene graph and an external knowledge base with controlled programs to disentangle the knowledge from other biases.

Ada-Segment: Automated Multi-loss Adaptation for Panoptic Segmentation

no code implementations • 7 Dec 2020 • Gengwei Zhang, Yiming Gao, Hang Xu, Hao Zhang, Zhenguo Li, Xiaodan Liang

Panoptic segmentation, which unifies instance segmentation and semantic segmentation, has recently attracted increasing attention.

Ranked #17 on Panoptic Segmentation on COCO test-dev

AutoSync: Learning to Synchronize for Data-Parallel Distributed Deep Learning

no code implementations • NeurIPS 2020 • Hao Zhang, Yuan Li, Zhijie Deng, Xiaodan Liang, Lawrence Carin, Eric Xing

Synchronization is a key step in data-parallel distributed machine learning (ML).

Continuous Transition: Improving Sample Efficiency for Continuous Control Problems via MixUp

1 code implementation • 30 Nov 2020 • Junfan Lin, Zhongzhan Huang, Keze Wang, Xiaodan Liang, Weiwei Chen, Liang Lin

Although deep reinforcement learning (RL) has been successfully applied to a variety of robotic control tasks, it is still challenging to apply it to real-world tasks due to its poor sample efficiency.

Towards Robust Partially Supervised Multi-Structure Medical Image Segmentation on Small-Scale Data

no code implementations • 28 Nov 2020 • Nanqing Dong, Michael Kampffmeyer, Xiaodan Liang, Min Xu, Irina Voiculescu, Eric P. Xing

To bridge the methodological gaps in partially supervised learning (PSL) under data scarcity, we propose Vicinal Labels Under Uncertainty (VLUU), a simple yet efficient framework utilizing the human structure similarity for partially supervised medical image segmentation.

Auto-Panoptic: Cooperative Multi-Component Architecture Search for Panoptic Segmentation

2 code implementations • NeurIPS 2020 • Yangxin Wu, Gengwei Zhang, Hang Xu, Xiaodan Liang, Liang Lin

In this work, we propose an efficient, cooperative and highly automated framework to simultaneously search for all main components including backbone, segmentation branches, and feature fusion module in a unified panoptic segmentation pipeline based on the prevailing one-shot Network Architecture Search (NAS) paradigm.

Towards Interpretable Natural Language Understanding with Explanations as Latent Variables

1 code implementation • NeurIPS 2020 • Wangchunshu Zhou, Jinyi Hu, Hanlin Zhang, Xiaodan Liang, Maosong Sun, Chenyan Xiong, Jian Tang

In this paper, we develop a general framework for interpretable natural language understanding that requires only a small set of human annotated explanations for training.

Iterative Graph Self-Distillation

no code implementations • 23 Oct 2020 • Hanlin Zhang, Shuai Lin, Weiyang Liu, Pan Zhou, Jian Tang, Xiaodan Liang, Eric P. Xing

Recently, there has been increasing interest in the challenge of how to discriminatively vectorize graphs.

MedDG: An Entity-Centric Medical Consultation Dataset for Entity-Aware Medical Dialogue Generation

1 code implementation • 15 Oct 2020 • Wenge Liu, Jianheng Tang, Yi Cheng, Wenjie Li, Yefeng Zheng, Xiaodan Liang

To push forward future research on building expert-sensitive medical dialogue systems, we propose two kinds of medical dialogue tasks based on the MedDG dataset.

Semantically-Aligned Universal Tree-Structured Solver for Math Word Problems

1 code implementation • EMNLP 2020 • Jinghui Qin, Lihui Lin, Xiaodan Liang, Rumin Zhang, Liang Lin

A practical automatic solver for textual math word problems (MWPs) should be able to solve various textual MWPs, while most existing works focus only on one-unknown linear MWPs.

Ranked #10 on Math Word Problem Solving on ALG514

GRADE: Automatic Graph-Enhanced Coherence Metric for Evaluating Open-Domain Dialogue Systems

1 code implementation • EMNLP 2020 • Lishan Huang, Zheng Ye, Jinghui Qin, Liang Lin, Xiaodan Liang

Capitalizing on the topic-level dialogue graph, we propose a new evaluation metric GRADE, which stands for Graph-enhanced Representations for Automatic Dialogue Evaluation.

CurveLane-NAS: Unifying Lane-Sensitive Architecture Search and Adaptive Point Blending

1 code implementation • ECCV 2020 • Hang Xu, Shaoju Wang, Xinyue Cai, Wei Zhang, Xiaodan Liang, Zhenguo Li

In this paper, we propose a novel lane-sensitive architecture search framework named CurveLane-NAS to automatically capture both long-range coherent and accurate short-range curve information while unifying architecture search and post-processing on curve lane predictions via point blending.

Ranked #12 on Lane Detection on CurveLanes

CATCH: Context-based Meta Reinforcement Learning for Transferrable Architecture Search

no code implementations • ECCV 2020 • Xin Chen, Yawen Duan, Zewei Chen, Hang Xu, Zihao Chen, Xiaodan Liang, Tong Zhang, Zhenguo Li

In spite of its remarkable progress, many algorithms are restricted to particular search spaces.

Ranked #13 on Neural Architecture Search on NAS-Bench-201, ImageNet-16-120 (Accuracy (Val) metric)

Auxiliary Signal-Guided Knowledge Encoder-Decoder for Medical Report Generation

2 code implementations • 6 Jun 2020 • Mingjie Li, Fuyu Wang, Xiaojun Chang, Xiaodan Liang

Firstly, the regions of primary interest to radiologists are usually located in a small area of the global image, meaning that the remaining parts of the image could be considered irrelevant noise in the training procedure.

Bidirectional Graph Reasoning Network for Panoptic Segmentation

no code implementations • CVPR 2020 • Yangxin Wu, Gengwei Zhang, Yiming Gao, Xiajun Deng, Ke Gong, Xiaodan Liang, Liang Lin

We introduce a Bidirectional Graph Reasoning Network (BGRNet), which incorporates graph structure into the conventional panoptic segmentation network to mine the intra-modular and inter-modular relations within and between foreground things and background stuff classes.

Linguistically Driven Graph Capsule Network for Visual Question Reasoning

no code implementations • 23 Mar 2020 • Qingxing Cao, Xiaodan Liang, Keze Wang, Liang Lin

Inspired by the property of a capsule network that can carve a tree structure inside a regular convolutional neural network (CNN), we propose a hierarchical compositional reasoning model called the "Linguistically driven Graph Capsule Network", where the compositional process is guided by the linguistic parse tree.

Vision-Dialog Navigation by Exploring Cross-modal Memory

1 code implementation • CVPR 2020 • Yi Zhu, Fengda Zhu, Zhaohuan Zhan, Bingqian Lin, Jianbin Jiao, Xiaojun Chang, Xiaodan Liang

Benefiting from the collaborative learning of the L-mem and the V-mem, our CMN is able to explore the memory of decision making in historical navigation actions that is relevant to the current step.

Towards Causality-Aware Inferring: A Sequential Discriminative Approach for Medical Diagnosis

1 code implementation • 14 Mar 2020 • Junfan Lin, Keze Wang, Ziliang Chen, Xiaodan Liang, Liang Lin

To eliminate this bias and inspired by the propensity score matching technique with causal diagram, we propose a propensity-based patient simulator to effectively answer unrecorded inquiry by drawing knowledge from the other records; Bias (ii) inherently comes along with the passively collected data, and is one of the key obstacles for training the agent towards "learning how" rather than "remembering what".

ElixirNet: Relation-aware Network Architecture Adaptation for Medical Lesion Detection

no code implementations • 3 Mar 2020 • Chenhan Jiang, Shaoju Wang, Hang Xu, Xiaodan Liang, Nong Xiao

Is a hand-crafted detection network tailored for natural images undoubtedly good enough for a discrepant medical lesion domain?

Universal-RCNN: Universal Object Detector via Transferable Graph R-CNN

no code implementations • 18 Feb 2020 • Hang Xu, Linpu Fang, Xiaodan Liang, Wenxiong Kang, Zhenguo Li

Finally, an InterDomain Transfer Module is proposed to exploit diverse transfer dependencies across all domains and enhance the regional feature representation by attending and transferring semantic contexts globally.

Dynamic Knowledge Routing Network For Target-Guided Open-Domain Conversation

1 code implementation • 4 Feb 2020 • Jinghui Qin, Zheng Ye, Jianheng Tang, Xiaodan Liang

Target-guided open-domain conversation aims to proactively and naturally guide a dialogue agent or human to achieve specific goals, topics or keywords during open-ended conversations.

Blockwisely Supervised Neural Architecture Search with Knowledge Distillation

1 code implementation • 29 Nov 2019 • Changlin Li, Jiefeng Peng, Liuchun Yuan, Guangrun Wang, Xiaodan Liang, Liang Lin, Xiaojun Chang

Moreover, we find that the knowledge of a network model lies not only in the network parameters but also in the network architecture.

Ranked #1 on Neural Architecture Search on CIFAR-100

SM-NAS: Structural-to-Modular Neural Architecture Search for Object Detection

no code implementations • 22 Nov 2019 • Lewei Yao, Hang Xu, Wei Zhang, Xiaodan Liang, Zhenguo Li

In this paper, we present a two-stage coarse-to-fine searching strategy named Structural-to-Modular NAS (SM-NAS) for searching a GPU-friendly design of both an efficient combination of modules and better modular-level architecture for object detection.

Vision-Language Navigation with Self-Supervised Auxiliary Reasoning Tasks

no code implementations • CVPR 2020 • Fengda Zhu, Yi Zhu, Xiaojun Chang, Xiaodan Liang

In this paper, we introduce Auxiliary Reasoning Navigation (AuxRN), a framework with four self-supervised auxiliary reasoning tasks to take advantage of the additional training signals derived from the semantic information.

Ranked #13 on Vision and Language Navigation on VLN Challenge

Heterogeneous Graph Learning for Visual Commonsense Reasoning

1 code implementation • NeurIPS 2019 • Weijiang Yu, Jingwen Zhou, Weihao Yu, Xiaodan Liang, Nong Xiao

Our HGL consists of a primal vision-to-answer heterogeneous graph (VAHG) module and a dual question-to-answer heterogeneous graph (QAHG) module to interactively refine reasoning paths for semantic agreement.

Layout-Graph Reasoning for Fashion Landmark Detection

no code implementations • CVPR 2019 • Weijiang Yu, Xiaodan Liang, Ke Gong, Chenhan Jiang, Nong Xiao, Liang Lin

Each Layout-Graph Reasoning (LGR) layer maps feature representations into structural graph nodes via a Map-to-Node module, performs reasoning over structural graph nodes to achieve global layout coherency via a layout-graph reasoning module, and then maps graph nodes back to enhance feature representations via a Node-to-Map module.