Effective Approaches to Batch Parallelization for Dynamic Neural Network Architectures

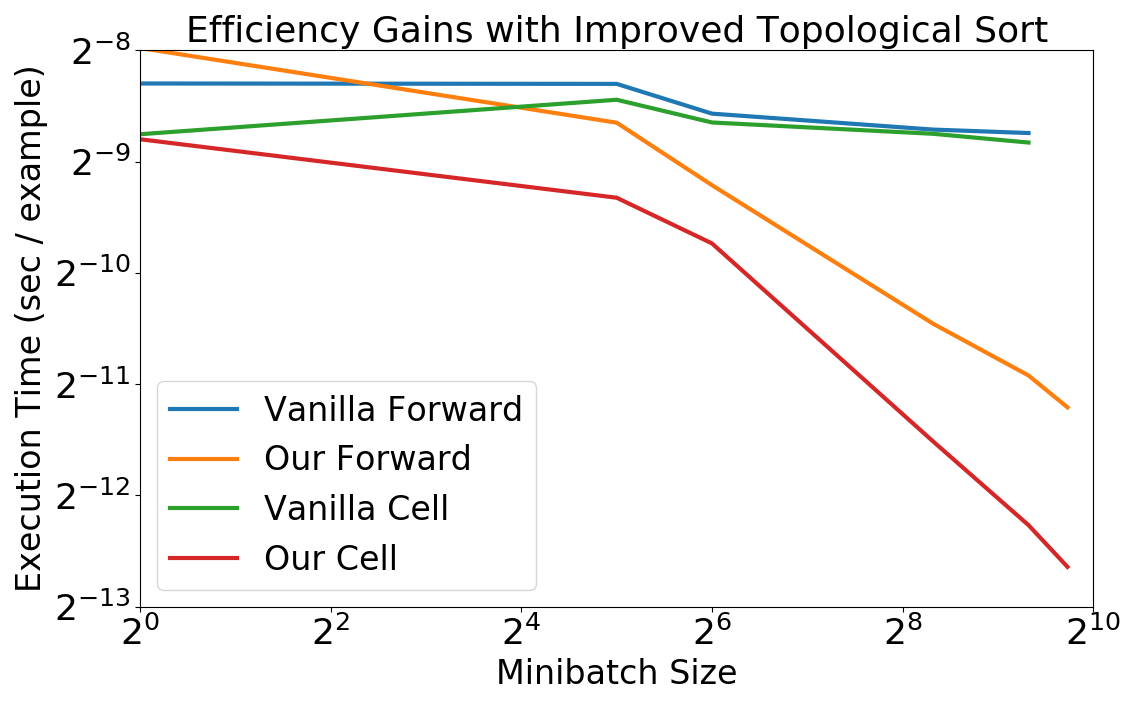

We present a simple dynamic batching approach applicable to a large class of dynamic architectures that consistently yields speedups of over 10x. We provide performance bounds when the architecture is not known a priori and a stronger bound in the special case where the architecture is a predetermined balanced tree. We evaluate our approach on Johnson et al.'s recent visual question answering (VQA) result of his CLEVR dataset by Inferring and Executing Programs (IEP). We also evaluate on sparsely gated mixture of experts layers and achieve speedups of up to 1000x over the naive implementation.

PDF Abstract

CLEVR

CLEVR