GBDT-MO: Gradient Boosted Decision Trees for Multiple Outputs

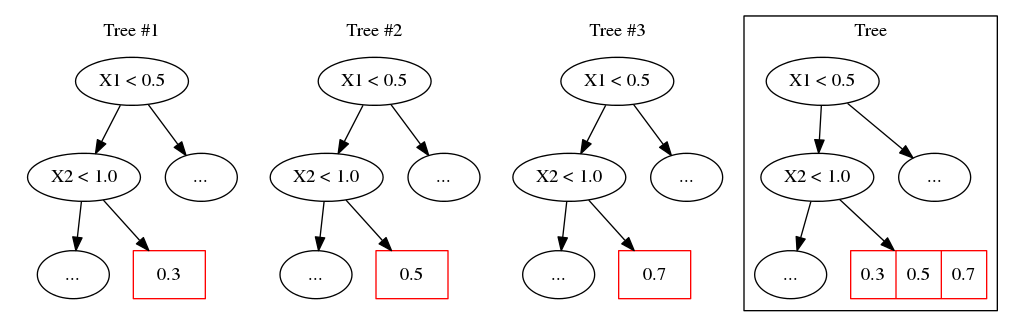

Gradient boosted decision trees (GBDTs) are widely used in machine learning, but current GBDT implementations output a single variable. When there are multiple outputs, GBDT constructs a separate set of trees for each output variable. This strategy ignores the correlations between variables and leads to redundancy in the learned tree structures. In this paper, we propose GBDT-MO, a general method for learning GBDTs with multiple outputs. Each leaf of GBDT-MO produces predictions for all variables or for an automatically selected subset of them. This is achieved by summing the objective gains over all output variables when evaluating a split. Moreover, we extend histogram approximation to the multiple-output case to speed up training. Experiments on synthetic and real-world datasets verify that GBDT-MO achieves outstanding performance in terms of both accuracy and training speed. Our code is available online.
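To make the idea of summing objective gains over output variables concrete, here is a minimal sketch in plain Python. It assumes the standard second-order leaf objective used in many boosting libraries, G² / (H + λ) per output, where G and H are sums of gradients and Hessians; the abstract does not state the exact formula, so this form, the function names (`leaf_objective`, `split_gain`), and the regularizer `lam` are illustrative assumptions rather than the paper's implementation.

```python
def column_sums(rows, n_outputs):
    """Sum a list of per-sample vectors column by column."""
    totals = [0.0] * n_outputs
    for row in rows:
        for j, value in enumerate(row):
            totals[j] += value
    return totals


def leaf_objective(grad_sums, hess_sums, lam=1.0):
    """Assumed per-leaf score: sum over outputs j of G_j^2 / (H_j + lam)."""
    return sum(g * g / (h + lam) for g, h in zip(grad_sums, hess_sums))


def split_gain(grads, hess, left_mask, lam=1.0):
    """Gain of one candidate split, summed over all output variables.

    grads, hess: per-sample lists of length n_outputs.
    left_mask:   True where a sample goes to the left child.
    """
    n_outputs = len(grads[0])

    def score(keep):
        g = column_sums([r for r, k in zip(grads, keep) if k], n_outputs)
        h = column_sums([r for r, k in zip(hess, keep) if k], n_outputs)
        return leaf_objective(g, h, lam)

    right_mask = [not m for m in left_mask]
    everyone = [True] * len(left_mask)
    return 0.5 * (score(left_mask) + score(right_mask) - score(everyone))


# Toy data: 4 samples, 2 correlated outputs, squared-error loss with
# initial prediction 0, so gradient = -target and Hessian = 1.
grads = [[-1.0, -1.0], [-1.0, -1.0], [1.0, 1.0], [1.0, 1.0]]
hess = [[1.0, 1.0]] * 4

gain_aligned = split_gain(grads, hess, [True, True, False, False])
gain_mixed = split_gain(grads, hess, [True, False, True, False])
```

Because both outputs contribute to a single score, one split that separates the samples well on both outputs is preferred over a split that mixes them, so a single tree structure can be shared across outputs instead of being learned once per output.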

NUS-WIDE
