Image-Based CLIP-Guided Essence Transfer

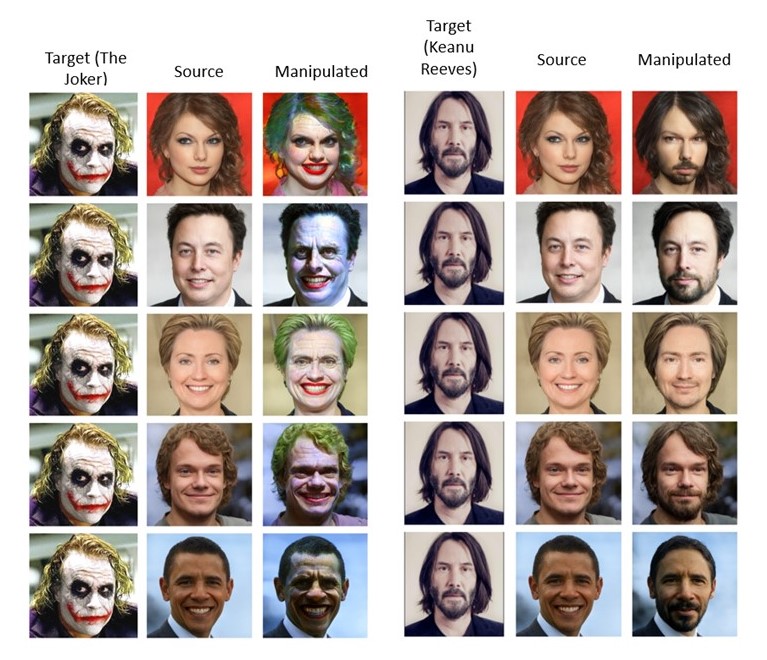

We make the distinction between (i) style transfer, in which a source image is manipulated to match the textures and colors of a target image, and (ii) essence transfer, in which one edits the source image to include high-level semantic attributes from the target. Crucially, the semantic attributes that constitute the essence of an image may differ from image to image. Our blending operator combines the powerful StyleGAN generator and the semantic encoder of CLIP in a novel way that is simultaneously additive in both latent spaces, resulting in a mechanism that guarantees both identity preservation and high-level feature transfer without relying on a facial recognition network. We present two variants of our method. The first is based on optimization, while the second fine-tunes an existing inversion encoder to perform essence extraction. Through extensive experiments, we demonstrate the superiority of our methods for essence transfer over existing methods for style transfer, domain adaptation, and text-based semantic editing. Our code is available at https://github.com/hila-chefer/TargetCLIP.
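To make the "additive in both latent spaces" idea concrete, the following is a minimal sketch under stated assumptions, not the authors' implementation. It assumes the edit is a single offset `b` added to every source latent code, and that the CLIP embeddings of the source and edited images are already available as NumPy arrays. The names `blend`, `essence_losses`, `emb_src`, `emb_edit`, and `emb_target` are hypothetical, and the two loss terms are one plausible way to score "moves toward the target" and "moves every source in the same CLIP direction".

```python
import numpy as np


def cosine(a, b, eps=1e-8):
    """Cosine similarity between two 1-D vectors."""
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b) + eps))


def blend(w_source, b):
    """Additive edit in the generator latent space (assumed form).

    The same offset b is added to every source code, so the edit does not
    depend on which source image it is applied to.
    """
    return w_source + b


def essence_losses(emb_src, emb_edit, emb_target):
    """Score an edit from precomputed semantic embeddings (hypothetical helper).

    emb_src, emb_edit: arrays of shape (n, d), embeddings before and after the edit.
    emb_target: array of shape (d,), embedding of the target image.
    Returns (transfer, consistency), both lower-is-better.
    """
    n = len(emb_src)
    # Transfer term: edited images should be close to the target in embedding space.
    transfer = np.mean([1.0 - cosine(e, emb_target) for e in emb_edit])
    # Consistency term: the induced shift should point the same way for every source,
    # i.e. the edit is (approximately) additive in the embedding space as well.
    shifts = emb_edit - emb_src
    pairs = [(i, j) for i in range(n) for j in range(i + 1, n)]
    consistency = (
        np.mean([1.0 - cosine(shifts[i], shifts[j]) for i, j in pairs]) if pairs else 0.0
    )
    return float(transfer), float(consistency)
```

In an optimization-based variant, one would compute `emb_edit` by passing `blend(w, b)` through the generator and the semantic encoder, then minimize a weighted sum of the two terms with respect to `b`; the weighting and the optimizer are left open here because the abstract does not specify them.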


FFHQ
