m2mKD: Module-to-Module Knowledge Distillation for Modular Transformers

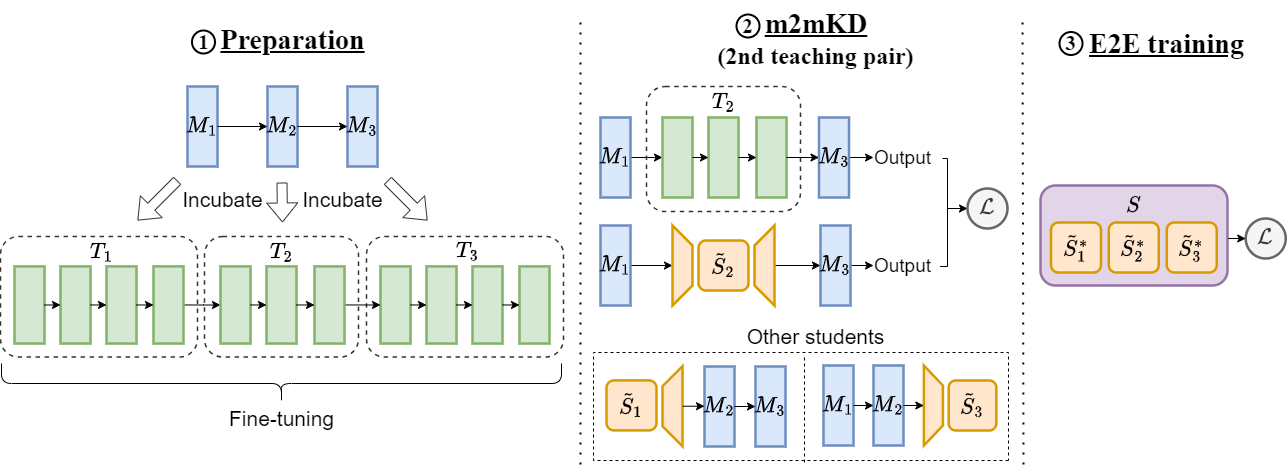

Modular neural architectures are gaining attention for their powerful generalization and efficient adaptation to new domains. However, training these models is challenging because their intrinsic sparse connectivity makes optimization difficult. Leveraging knowledge from monolithic models through techniques like knowledge distillation can facilitate training and enable integration of diverse knowledge. Nevertheless, conventional knowledge distillation approaches are not tailored to modular models and struggle with their unique architectures and enormous parameter counts. Motivated by these challenges, we propose module-to-module knowledge distillation (m2mKD) for transferring knowledge between modules. m2mKD combines a teacher module from a pretrained monolithic model and a student module from a modular model, each in turn, with a shared meta model, and encourages the student module to mimic the behaviour of the teacher module. We evaluate m2mKD on two modular neural architectures: Neural Attentive Circuits (NACs) and Vision Mixture-of-Experts (V-MoE). Applying m2mKD to NACs yields significant improvements in IID accuracy on Tiny-ImageNet (up to 5.6%) and OOD robustness on Tiny-ImageNet-R (up to 4.2%). Additionally, the V-MoE-Base model trained with m2mKD achieves 3.5% higher accuracy than end-to-end training on ImageNet-1k. Code is available at https://github.com/kamanphoebe/m2mKD.
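The module-to-module setup described in the abstract can be illustrated with a toy sketch. This is not the authors' implementation: the abstract does not specify the loss, so a standard softened-softmax KL distillation loss is assumed here, and the names `hybrid_forward`, `kd_loss`, `meta_layers`, `teacher_module`, and `student_module` are hypothetical. Modules are plain Python functions on lists of floats so that the example runs with the standard library alone.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of floats."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kd_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions.

    Assumption: a generic distillation loss, not taken from the paper.
    """
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(
        t * (math.log(t) - math.log(s))
        for t, s in zip(p_teacher, p_student)
        if t > 0
    )
    return kl * temperature ** 2


def hybrid_forward(meta_layers, index, module, x):
    """Run the shared meta model with the layer at `index` swapped for `module`."""
    for i, layer in enumerate(meta_layers):
        x = module(x) if i == index else layer(x)
    return x


# Hypothetical shared meta model: three tiny "layers".
meta_layers = [
    lambda x: [2.0 * v for v in x],
    lambda x: [v + 1.0 for v in x],
    lambda x: list(x),
]

# Hypothetical teacher module (stands in for a block of a monolithic model)
# and student module (stands in for a module of the modular model).
teacher_module = lambda x: [v * v for v in x]
student_module = lambda x: [3.0 * v for v in x]

inputs = [1.0, 2.0]
teacher_out = hybrid_forward(meta_layers, 1, teacher_module, inputs)
student_out = hybrid_forward(meta_layers, 1, student_module, inputs)

# The student module would be trained to drive this value towards zero.
loss = kd_loss(student_out, teacher_out)
```

Both hybrid networks share every layer except the swapped one, so in this sketch any difference between `teacher_out` and `student_out` comes from the two modules alone. In a real training loop the loss would be minimized with respect to the student module's parameters only.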

ImageNet

CIFAR-100

CUB-200-2011

ImageNet-R