Model-to-Circuit Cross-Approximation For Printed Machine Learning Classifiers

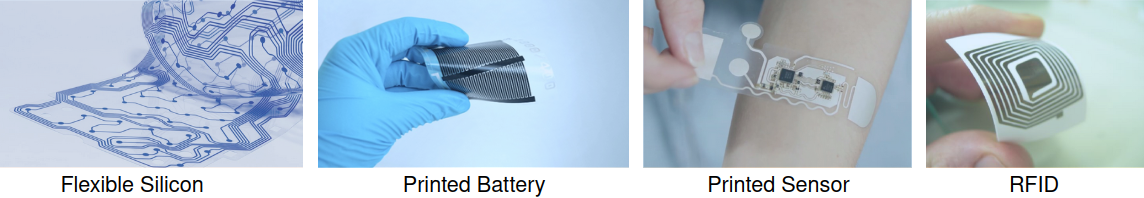

Printed electronics (PE) promises on-demand fabrication, low non-recurring engineering costs, and sub-cent fabrication costs. It also allows a degree of customization that would be infeasible in silicon, and bespoke architectures are widely used to improve the efficiency of emerging PE machine learning (ML) applications. Nevertheless, the large feature sizes of PE prohibit the realization of complex ML models, even with bespoke architectures. In this work, we present an automated cross-layer approximation framework, tailored to bespoke architectures, that enables complex ML models such as Multi-Layer Perceptrons (MLPs) and Support Vector Machines (SVMs) in PE. Our framework cooperatively combines a hardware-driven coefficient approximation of the ML model at the algorithmic level, netlist pruning at the logic level, and voltage over-scaling at the circuit level. An extensive experimental evaluation on 12 MLPs, 12 SVMs, and more than 6000 approximate and exact designs demonstrates that our model-to-circuit cross-approximation delivers area- and power-optimal designs that, compared to state-of-the-art exact designs, achieve on average a 51% area reduction and a 66% power reduction for less than 5% accuracy loss. Finally, we demonstrate that our framework enables 80% of the examined classifiers to be battery-powered with almost identical accuracy to the exact designs, thus paving the way towards complex smart printed applications.
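To make the algorithmic-level step more concrete, the following is a minimal sketch of one way a hardware-driven coefficient approximation could work. It assumes (this is our assumption, not something the abstract states) that the cost of a bespoke, hardwired constant multiplier grows with the number of nonzero digits in the coefficient's canonical signed digit (CSD) representation, so nudging a quantized coefficient to a nearby value with fewer nonzero digits saves adders. The helper names `csd_weight` and `approximate_coefficient` and the search window `max_delta` are hypothetical; the framework's actual cost model and search strategy may differ.

```python
def csd_weight(n: int) -> int:
    """Number of nonzero digits in the canonical signed digit (NAF) form of n."""
    n = abs(n)
    weight = 0
    while n:
        if n & 1:
            # Choose digit +1 or -1 so that the next higher digit becomes zero.
            digit = 2 - (n & 3)  # n % 4 == 1 -> +1, n % 4 == 3 -> -1
            n -= digit
            weight += 1
        n >>= 1
    return weight


def approximate_coefficient(c: int, max_delta: int) -> int:
    """Pick the value within [c - max_delta, c + max_delta] that is cheapest
    under the assumed cost model (fewest nonzero CSD digits); ties go to the
    value closest to c, then to the smaller value."""
    candidates = range(c - max_delta, c + max_delta + 1)
    return min(candidates, key=lambda x: (csd_weight(x), abs(x - c), x))


if __name__ == "__main__":
    for coeff in (7, 45, -45):
        approx = approximate_coefficient(coeff, max_delta=2)
        print(coeff, csd_weight(coeff), "->", approx, csd_weight(approx))
```

For example, the integer coefficient 45 (four nonzero CSD digits, 64 - 16 - 4 + 1) can be replaced by 44 (three digits) within a window of ±2, which under this cost model removes one adder from its hardwired multiplier. In a full flow, each such substitution would then be checked against the classifier's accuracy budget before being accepted.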
