Multi-Pass Q-Networks for Deep Reinforcement Learning with Parameterised Action Spaces

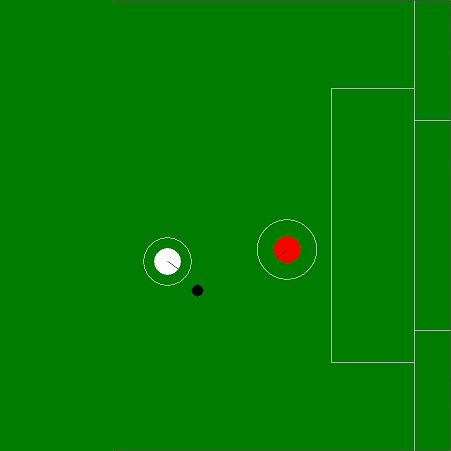

Parameterised actions in reinforcement learning are composed of discrete actions with continuous action-parameters. This provides a framework for solving complex domains that require combining high-level actions with flexible control. The recent P-DQN algorithm extends deep Q-networks to learn over such action spaces. However, it treats all action-parameters as a single joint input to the Q-network, invalidating its theoretical foundations. We analyse the issues with this approach and propose a novel method, multi-pass deep Q-networks, or MP-DQN, to address them. We empirically demonstrate that MP-DQN significantly outperforms P-DQN and other previous algorithms in terms of data efficiency and converged policy performance on the Platform, Robot Soccer Goal, and Half Field Offense domains.

PDF Abstract