Search Results for author: I-Chen Wu

Found 24 papers, 8 papers with code

PPO-Clip Attains Global Optimality: Towards Deeper Understandings of Clipping

no code implementations • 19 Dec 2023 • Nai-Chieh Huang, Ping-Chun Hsieh, Kuo-Hao Ho, I-Chen Wu

Our findings highlight the $O(1/\sqrt{T})$ min-iterate convergence rate specifically in the context of neural function approximation.

Game Solving with Online Fine-Tuning

1 code implementation • NeurIPS 2023 • Ti-Rong Wu, Hung Guei, Ting Han Wei, Chung-Chin Shih, Jui-Te Chin, I-Chen Wu

Solving a game typically means to find the game-theoretic value (outcome given optimal play), and optionally a full strategy to follow in order to achieve that outcome.

Towards Human-Like RL: Taming Non-Naturalistic Behavior in Deep RL via Adaptive Behavioral Costs in 3D Games

no code implementations • 27 Sep 2023 • Kuo-Hao Ho, Ping-Chun Hsieh, Chiu-Chou Lin, You-Ren Luo, Feng-Jian Wang, I-Chen Wu

In this paper, we propose a new approach called Adaptive Behavioral Costs in Reinforcement Learning (ABC-RL) for training a human-like agent with competitive strength.

Residual Scheduling: A New Reinforcement Learning Approach to Solving Job Shop Scheduling Problem

no code implementations • 27 Sep 2023 • Kuo-Hao Ho, Ruei-Yu Jheng, Ji-Han Wu, Fan Chiang, Yen-Chi Chen, Yuan-Yu Wu, I-Chen Wu

Interestingly in our experiments, our approach even reaches zero gap for 49 among 50 JSP instances whose job numbers are more than 150 on 20 machines.

Reinforcement Learning for Picking Cluttered General Objects with Dense Object Descriptors

no code implementations • 20 Apr 2023 • Hoang-Giang Cao, Weihao Zeng, I-Chen Wu

We conduct experiments to demonstrate that our CODs is able to consistently represent seen and unseen cluttered objects, which allowed for the picking policy to robustly pick cluttered general objects.

Learning Sim-to-Real Dense Object Descriptors for Robotic Manipulation

no code implementations • 18 Apr 2023 • Hoang-Giang Cao, Weihao Zeng, I-Chen Wu

In this paper, we present Sim-to-Real Dense Object Nets (SRDONs), a dense object descriptor that not only understands the object via appropriate representation but also maps simulated and real data to a unified feature space with pixel consistency.

A Local-Pattern Related Look-Up Table

no code implementations • 22 Dec 2022 • Chung-Chin Shih, Ting Han Wei, Ti-Rong Wu, I-Chen Wu

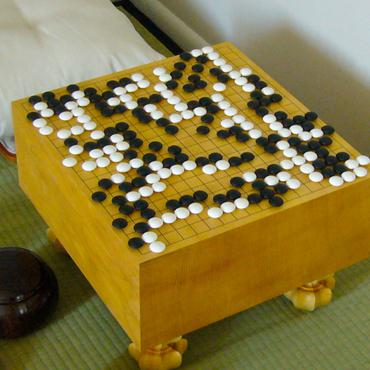

Experiments also show that the use of an RZT instead of a traditional transposition table significantly reduces the number of searched nodes on two data sets of 7x7 and 19x19 L&D Go problems.

Are AlphaZero-like Agents Robust to Adversarial Perturbations?

1 code implementation • 7 Nov 2022 • Li-Cheng Lan, Huan Zhang, Ti-Rong Wu, Meng-Yu Tsai, I-Chen Wu, Cho-Jui Hsieh

Given that the state space of Go is extremely large and a human player can play the game from any legal state, we ask whether adversarial states exist for Go AIs that may lead them to play surprisingly wrong actions.

A Novel Approach to Solving Goal-Achieving Problems for Board Games

no code implementations • 5 Dec 2021 • Chung-Chin Shih, Ti-Rong Wu, Ting Han Wei, I-Chen Wu

This paper first proposes a novel RZ-based approach, called the RZ-Based Search (RZS), to solving L&D problems for Go.

Optimistic Temporal Difference Learning for 2048

1 code implementation • 22 Nov 2021 • Hung Guei, Lung-Pin Chen, I-Chen Wu

Our experiments show that both TD and TC learning with OI significantly improve the performance.

Neural PPO-Clip Attains Global Optimality: A Hinge Loss Perspective

no code implementations • 26 Oct 2021 • Nai-Chieh Huang, Ping-Chun Hsieh, Kuo-Hao Ho, Hsuan-Yu Yao, Kai-Chun Hu, Liang-Chun Ouyang, I-Chen Wu

Policy optimization is a fundamental principle for designing reinforcement learning algorithms, and one example is the proximal policy optimization algorithm with a clipped surrogate objective (PPO-Clip), which has been popularly used in deep reinforcement learning due to its simplicity and effectiveness.

An Unsupervised Video Game Playstyle Metric via State Discretization

1 code implementation • 3 Oct 2021 • Chiu-Chou Lin, Wei-Chen Chiu, I-Chen Wu

In this paper, we propose the first metric for video game playstyles directly from the game observations and actions, without any prior specification on the playstyle in the target game.

AlphaZero-based Proof Cost Network to Aid Game Solving

1 code implementation • ICLR 2022 • Ti-Rong Wu, Chung-Chin Shih, Ting Han Wei, Meng-Yu Tsai, Wei-Yuan Hsu, I-Chen Wu

We train a Proof Cost Network (PCN), where proof cost is a heuristic that estimates the amount of work required to solve problems.

Learning to Stop: Dynamic Simulation Monte-Carlo Tree Search

no code implementations • 14 Dec 2020 • Li-Cheng Lan, Meng-Yu Tsai, Ti-Rong Wu, I-Chen Wu, Cho-Jui Hsieh

This implies that a significant amount of resources can be saved if we are able to stop the searching earlier when we are confident with the current searching result.

Sim-To-Real Transfer for Miniature Autonomous Car Racing

no code implementations • 11 Nov 2020 • Yeong-Jia Roger Chu, Ting-Han Wei, Jin-Bo Huang, Yuan-Hao Chen, I-Chen Wu

With our method, a model with 18.4% completion rate on the testing track is able to help teach a student model with 52% completion.

Rethinking Deep Policy Gradients via State-Wise Policy Improvement

no code implementations • NeurIPS Workshop ICBINB 2020 • Kai-Chun Hu, Ping-Chun Hsieh, Ting Han Wei, I-Chen Wu

Deep policy gradient is one of the major frameworks in reinforcement learning, and it has been shown to improve parameterized policies across various tasks and environments.

Accelerating and Improving AlphaZero Using Population Based Training

1 code implementation • 13 Mar 2020 • Ti-Rong Wu, Ting-Han Wei, I-Chen Wu

This is compared to a saturated non-PBT agent, which achieves a win rate of 47% against ELF OpenGo under the same circumstances.

Multiple Policy Value Monte Carlo Tree Search

2 code implementations • 31 May 2019 • Li-Cheng Lan, Wei Li, Ting-Han Wei, I-Chen Wu

Many of the strongest game playing programs use a combination of Monte Carlo tree search (MCTS) and deep neural networks (DNN), where the DNNs are used as policy or value evaluators.

Towards Combining On-Off-Policy Methods for Real-World Applications

no code implementations • 24 Apr 2019 • Kai-Chun Hu, Chen-Huan Pi, Ting Han Wei, I-Chen Wu, Stone Cheng, Yi-Wei Dai, Wei-Yuan Ye

In this paper, we point out a fundamental property of the objective in reinforcement learning, with which we can reformulate the policy gradient objective into a perceptron-like loss function, removing the need to distinguish between on and off policy training.

Stochastic Gradient Descent with Hyperbolic-Tangent Decay on Classification

2 code implementations • 5 Jun 2018 • Bo Yang Hsueh, Wei Li, I-Chen Wu

The learning rate scheduler has been a critical issue in deep neural network training.

Comparison Training for Computer Chinese Chess

no code implementations • 23 Jan 2018 • Wen-Jie Tseng, Jr-Chang Chen, I-Chen Wu, Tinghan Wei

This improved version achieved a win rate of 81.65% against the trained version without additional features.

Multi-Labelled Value Networks for Computer Go

no code implementations • 30 May 2017 • Ti-Rong Wu, I-Chen Wu, Guan-Wun Chen, Ting-Han Wei, Tung-Yi Lai, Hung-Chun Wu, Li-Cheng Lan

First, the MSE of the ML value network is generally lower than the value network alone.

Multi-Stage Temporal Difference Learning for 2048-like Games

no code implementations • 23 Jun 2016 • Kun-Hao Yeh, I-Chen Wu, Chu-Hsuan Hsueh, Chia-Chuan Chang, Chao-Chin Liang, Han Chiang

After further tuned, our 2048 program reached 32768-tiles with a rate of 31.75% in 10,000 games, and one among these games even reached a 65536-tile, which is the first ever reaching a 65536-tile to our knowledge.

Hierarchical Reinforcement Learning

Playing the Game of 2048

Human vs. Computer Go: Review and Prospect

no code implementations • 7 Jun 2016 • Chang-Shing Lee, Mei-Hui Wang, Shi-Jim Yen, Ting-Han Wei, I-Chen Wu, Ping-Chiang Chou, Chun-Hsun Chou, Ming-Wan Wang, Tai-Hsiung Yang

The Google DeepMind challenge match in March 2016 was a historic achievement for computer Go development.