Search Results for author: Poulami Sinhamahapatra

Found 6 papers, 2 papers with code

Finding Dino: A plug-and-play framework for unsupervised detection of out-of-distribution objects using prototypes

no code implementations • 11 Apr 2024 • Poulami Sinhamahapatra, Franziska Schwaiger, Shirsha Bose, Huiyu Wang, Karsten Roscher, Stephan Guennemann

It is an inference-based method that does not require training on the domain dataset and relies on extracting relevant features from self-supervised pre-trained models.

Enhancing Interpretability of Vertebrae Fracture Grading using Human-interpretable Prototypes

no code implementations • 3 Apr 2024 • Poulami Sinhamahapatra, Suprosanna Shit, Anjany Sekuboyina, Malek Husseini, David Schinz, Nicolas Lenhart, Joern Menze, Jan Kirschke, Karsten Roscher, Stephan Guennemann

In this work, we propose a novel interpretable-by-design method, ProtoVerse, to find relevant sub-parts of vertebral fractures (prototypes) that reliably explain the model's decision in a human-understandable way.

Towards Human-Interpretable Prototypes for Visual Assessment of Image Classification Models

no code implementations • 22 Nov 2022 • Poulami Sinhamahapatra, Lena Heidemann, Maureen Monnet, Karsten Roscher

Explaining black-box Artificial Intelligence (AI) models is a cornerstone for trustworthy AI and a prerequisite for its use in safety-critical applications such that AI models can reliably assist humans in critical decisions.

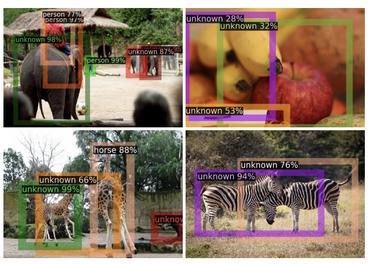

Is it all a cluster game? -- Exploring Out-of-Distribution Detection based on Clustering in the Embedding Space

no code implementations • 16 Mar 2022 • Poulami Sinhamahapatra, Rajat Koner, Karsten Roscher, Stephan Günnemann

It is essential for safety-critical applications of deep neural networks to determine when new inputs are significantly different from the training distribution.

OODformer: Out-Of-Distribution Detection Transformer

1 code implementation • 19 Jul 2021 • Rajat Koner, Poulami Sinhamahapatra, Karsten Roscher, Stephan Günnemann, Volker Tresp

A serious problem in image classification is that a trained model might perform well for input data that originates from the same distribution as the data available for model training, but perform much worse for out-of-distribution (OOD) samples.

Scenes and Surroundings: Scene Graph Generation using Relation Transformer

1 code implementation • 12 Jul 2021 • Rajat Koner, Poulami Sinhamahapatra, Volker Tresp

Identifying objects in an image and their mutual relationships as a scene graph leads to a deep understanding of image content.