Search Results for author: Taesung Park

Found 23 papers, 12 papers with code

Distilling Diffusion Models into Conditional GANs

no code implementations • 9 May 2024 • Minguk Kang, Richard Zhang, Connelly Barnes, Sylvain Paris, Suha Kwak, Jaesik Park, Eli Shechtman, Jun-Yan Zhu, Taesung Park

We propose a method to distill a complex multistep diffusion model into a single-step conditional GAN student model, dramatically accelerating inference, while preserving image quality.

Editable Image Elements for Controllable Synthesis

no code implementations • 24 Apr 2024 • Jiteng Mu, Michaël Gharbi, Richard Zhang, Eli Shechtman, Nuno Vasconcelos, Xiaolong Wang, Taesung Park

In this work, we propose an image representation that promotes spatial editing of input images using a diffusion model.

Lazy Diffusion Transformer for Interactive Image Editing

no code implementations • 18 Apr 2024 • Yotam Nitzan, Zongze Wu, Richard Zhang, Eli Shechtman, Daniel Cohen-Or, Taesung Park, Michaël Gharbi

We demonstrate that our approach is competitive with state-of-the-art inpainting methods in terms of quality and fidelity while providing a 10x speedup for typical user interactions, where the editing mask represents 10% of the image.

VideoGigaGAN: Towards Detail-rich Video Super-Resolution

no code implementations • 18 Apr 2024 • Yiran Xu, Taesung Park, Richard Zhang, Yang Zhou, Eli Shechtman, Feng Liu, Jia-Bin Huang, Difan Liu

We introduce VideoGigaGAN, a new generative VSR model that can produce videos with high-frequency details and temporal consistency.

Customizing Text-to-Image Diffusion with Camera Viewpoint Control

no code implementations • 18 Apr 2024 • Nupur Kumari, Grace Su, Richard Zhang, Taesung Park, Eli Shechtman, Jun-Yan Zhu

Model customization introduces new concepts to existing text-to-image models, enabling the generation of the new concept in novel contexts.

One-Step Image Translation with Text-to-Image Models

1 code implementation • 18 Mar 2024 • Gaurav Parmar, Taesung Park, Srinivasa Narasimhan, Jun-Yan Zhu

In this work, we address two limitations of existing conditional diffusion models: their slow inference speed due to the iterative denoising process and their reliance on paired data for model fine-tuning.

Multi-Scale Semantic Segmentation with Modified MBConv Blocks

no code implementations • 7 Feb 2024 • Xi Chen, Yang Cai, Yuan Wu, Bo Xiong, Taesung Park

Recently, MBConv blocks, initially designed for efficiency in resource-limited settings and later adapted for cutting-edge image classification performance, have demonstrated significant potential in image classification tasks.

Jump Cut Smoothing for Talking Heads

no code implementations • 9 Jan 2024 • Xiaojuan Wang, Taesung Park, Yang Zhou, Eli Shechtman, Richard Zhang

We leverage the appearance of the subject from the other source frames in the video, fusing it with a mid-level representation driven by DensePose keypoints and face landmarks.

One-step Diffusion with Distribution Matching Distillation

1 code implementation • 30 Nov 2023 • Tianwei Yin, Michaël Gharbi, Richard Zhang, Eli Shechtman, Fredo Durand, William T. Freeman, Taesung Park

We introduce Distribution Matching Distillation (DMD), a procedure to transform a diffusion model into a one-step image generator with minimal impact on image quality.

Holistic Evaluation of Text-To-Image Models

1 code implementation • NeurIPS 2023 • Tony Lee, Michihiro Yasunaga, Chenlin Meng, Yifan Mai, Joon Sung Park, Agrim Gupta, Yunzhi Zhang, Deepak Narayanan, Hannah Benita Teufel, Marco Bellagente, Minguk Kang, Taesung Park, Jure Leskovec, Jun-Yan Zhu, Li Fei-Fei, Jiajun Wu, Stefano Ermon, Percy Liang

The stunning qualitative improvement of recent text-to-image models has led to their widespread attention and adoption.

Expressive Text-to-Image Generation with Rich Text

no code implementations • ICCV 2023 • Songwei Ge, Taesung Park, Jun-Yan Zhu, Jia-Bin Huang

For each region, we enforce its text attributes by creating region-specific detailed prompts and applying region-specific guidance, and maintain its fidelity against plain-text generation through region-based injections.

Scaling up GANs for Text-to-Image Synthesis

1 code implementation • CVPR 2023 • Minguk Kang, Jun-Yan Zhu, Richard Zhang, Jaesik Park, Eli Shechtman, Sylvain Paris, Taesung Park

From a technical standpoint, it also marked a drastic change in the favored architecture to design generative image models.

Ranked #18 on Image Generation on ImageNet 256x256

Domain Expansion of Image Generators

1 code implementation • CVPR 2023 • Yotam Nitzan, Michaël Gharbi, Richard Zhang, Taesung Park, Jun-Yan Zhu, Daniel Cohen-Or, Eli Shechtman

First, we note the generator contains a meaningful, pretrained latent space.

ASSET: Autoregressive Semantic Scene Editing with Transformers at High Resolutions

1 code implementation • 24 May 2022 • Difan Liu, Sandesh Shetty, Tobias Hinz, Matthew Fisher, Richard Zhang, Taesung Park, Evangelos Kalogerakis

We present ASSET, a neural architecture for automatically modifying an input high-resolution image according to a user's edits on its semantic segmentation map.

BlobGAN: Spatially Disentangled Scene Representations

no code implementations • 5 May 2022 • Dave Epstein, Taesung Park, Richard Zhang, Eli Shechtman, Alexei A. Efros

Blobs are differentiably placed onto a feature grid that is decoded into an image by a generative adversarial network.

Contrastive Feature Loss for Image Prediction

1 code implementation • 12 Nov 2021 • Alex Andonian, Taesung Park, Bryan Russell, Phillip Isola, Jun-Yan Zhu, Richard Zhang

Training supervised image synthesis models requires a critic to compare two images: the ground truth to the result.

Mitigating Mode Collapse by Sidestepping Catastrophic Forgetting

no code implementations • 1 Jan 2021 • Karttikeya Mangalam, Rohin Garg, Jathushan Rajasegaran, Taesung Park

Generative Adversarial Networks (GANs) are a class of generative models used for various applications, but they have been known to suffer from the mode collapse problem, in which some modes of the target distribution are ignored by the generator.

A Customizable Dynamic Scenario Modeling and Data Generation Platform for Autonomous Driving

no code implementations • 30 Nov 2020 • Jay Shenoy, Edward Kim, Xiangyu Yue, Taesung Park, Daniel Fremont, Alberto Sangiovanni-Vincentelli, Sanjit Seshia

In this paper, we present a platform to model dynamic and interactive scenarios, generate the scenarios in simulation with different modalities of labeled sensor data, and collect this information for data augmentation.

Contrastive Learning for Unpaired Image-to-Image Translation

10 code implementations • 30 Jul 2020 • Taesung Park, Alexei A. Efros, Richard Zhang, Jun-Yan Zhu

Furthermore, we draw negatives from within the input image itself, rather than from the rest of the dataset.

Swapping Autoencoder for Deep Image Manipulation

4 code implementations • NeurIPS 2020 • Taesung Park, Jun-Yan Zhu, Oliver Wang, Jingwan Lu, Eli Shechtman, Alexei A. Efros, Richard Zhang

Deep generative models have become increasingly effective at producing realistic images from randomly sampled seeds, but using such models for controllable manipulation of existing images remains challenging.

Semantic Image Synthesis with Spatially-Adaptive Normalization

26 code implementations • CVPR 2019 • Taesung Park, Ming-Yu Liu, Ting-Chun Wang, Jun-Yan Zhu

Previous methods directly feed the semantic layout as input to the deep network, which is then processed through stacks of convolution, normalization, and nonlinearity layers.

Ranked #3 on Sketch-to-Image Translation on COCO-Stuff

CyCADA: Cycle-Consistent Adversarial Domain Adaptation

3 code implementations • ICML 2018 • Judy Hoffman, Eric Tzeng, Taesung Park, Jun-Yan Zhu, Phillip Isola, Kate Saenko, Alexei A. Efros, Trevor Darrell

Domain adaptation is critical for success in new, unseen environments.

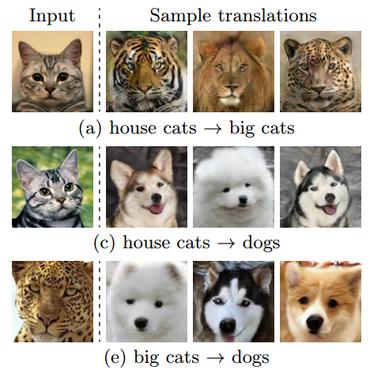

Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks

187 code implementations • ICCV 2017 • Jun-Yan Zhu, Taesung Park, Phillip Isola, Alexei A. Efros

Image-to-image translation is a class of vision and graphics problems where the goal is to learn the mapping between an input image and an output image using a training set of aligned image pairs.

Ranked #1 on Image-to-Image Translation on zebra2horse (Fréchet Inception Distance metric)

Multimodal Unsupervised Image-To-Image Translation

Style Transfer