Search Results for author: Ti-Rong Wu

Found 9 papers, 5 papers with code

Game Solving with Online Fine-Tuning

1 code implementation • NeurIPS 2023 • Ti-Rong Wu, Hung Guei, Ting Han Wei, Chung-Chin Shih, Jui-Te Chin, I-Chen Wu

Solving a game typically means finding the game-theoretic value (the outcome given optimal play), and optionally a full strategy to follow in order to achieve that outcome.

MiniZero: Comparative Analysis of AlphaZero and MuZero on Go, Othello, and Atari Games

1 code implementation • 17 Oct 2023 • Ti-Rong Wu, Hung Guei, Pei-Chiun Peng, Po-Wei Huang, Ting Han Wei, Chung-Chin Shih, Yun-Jui Tsai

This paper presents MiniZero, a zero-knowledge learning framework that supports four state-of-the-art algorithms, including AlphaZero, MuZero, Gumbel AlphaZero, and Gumbel MuZero.

A Local-Pattern Related Look-Up Table

no code implementations • 22 Dec 2022 • Chung-Chin Shih, Ting Han Wei, Ti-Rong Wu, I-Chen Wu

Experiments also show that the use of an RZT instead of a traditional transposition table significantly reduces the number of searched nodes on two data sets of 7x7 and 19x19 L&D Go problems.

Are AlphaZero-like Agents Robust to Adversarial Perturbations?

1 code implementation • 7 Nov 2022 • Li-Cheng Lan, Huan Zhang, Ti-Rong Wu, Meng-Yu Tsai, I-Chen Wu, Cho-Jui Hsieh

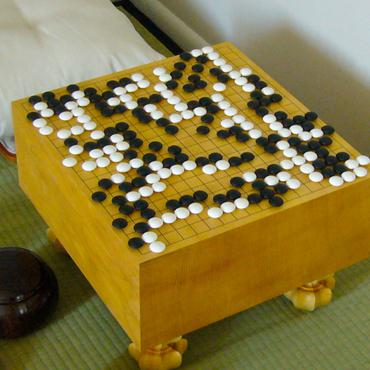

Given that the state space of Go is extremely large and a human player can play the game from any legal state, we ask whether adversarial states exist for Go AIs that may lead them to play surprisingly wrong actions.

A Novel Approach to Solving Goal-Achieving Problems for Board Games

no code implementations • 5 Dec 2021 • Chung-Chin Shih, Ti-Rong Wu, Ting Han Wei, I-Chen Wu

This paper first proposes a novel RZ-based approach, called the RZ-Based Search (RZS), to solving L&D problems for Go.

AlphaZero-based Proof Cost Network to Aid Game Solving

1 code implementation • ICLR 2022 • Ti-Rong Wu, Chung-Chin Shih, Ting Han Wei, Meng-Yu Tsai, Wei-Yuan Hsu, I-Chen Wu

We train a Proof Cost Network (PCN), where proof cost is a heuristic that estimates the amount of work required to solve problems.

Learning to Stop: Dynamic Simulation Monte-Carlo Tree Search

no code implementations • 14 Dec 2020 • Li-Cheng Lan, Meng-Yu Tsai, Ti-Rong Wu, I-Chen Wu, Cho-Jui Hsieh

This implies that a significant amount of resources can be saved if we are able to stop the search earlier when we are confident in the current search result.

Accelerating and Improving AlphaZero Using Population Based Training

1 code implementation • 13 Mar 2020 • Ti-Rong Wu, Ting-Han Wei, I-Chen Wu

This is compared to a saturated non-PBT agent, which achieves a win rate of 47% against ELF OpenGo under the same circumstances.

Multi-Labelled Value Networks for Computer Go

no code implementations • 30 May 2017 • Ti-Rong Wu, I-Chen Wu, Guan-Wun Chen, Ting-Han Wei, Tung-Yi Lai, Hung-Chun Wu, Li-Cheng Lan

First, the MSE of the ML value network is generally lower than that of the value network alone.