Search Results for author: Yu Ding

Found 46 papers, 11 papers with code

Triple GNNs: Introducing Syntactic and Semantic Information for Conversational Aspect-Based Quadruple Sentiment Analysis

1 code implementation • 15 Mar 2024 • Binbin Li, Yuqing Li, Siyu Jia, Bingnan Ma, Yu Ding, Zisen Qi, Xingbang Tan, Menghan Guo, Shenghui Liu

This necessitates a dual focus on both the syntactic information of individual utterances and the semantic interaction among them.

Tracking Dynamic Gaussian Density with a Theoretically Optimal Sliding Window Approach

no code implementations • 11 Mar 2024 • Yinsong Wang, Yu Ding, Shahin Shahrampour

Dynamic density estimation is ubiquitous in many applications, including computer vision and signal processing.

Say Anything with Any Style

no code implementations • 11 Mar 2024 • Shuai Tan, Bin Ji, Yu Ding, Ye Pan

To adapt to different speaking styles, we steer clear of employing a universal network by exploring an elaborate HyperStyle to produce the style-specific weights offset for the style branch.

Towards a Simultaneous and Granular Identity-Expression Control in Personalized Face Generation

no code implementations • 2 Jan 2024 • Renshuai Liu, Bowen Ma, Wei Zhang, Zhipeng Hu, Changjie Fan, Tangjie Lv, Yu Ding, Xuan Cheng

We devise a novel diffusion model that can perform face swapping and reenactment simultaneously.

On the Convergence of Federated Averaging under Partial Participation for Over-parameterized Neural Networks

no code implementations • 9 Oct 2023 • Xin Liu, Wei Li, Dazhi Zhan, Yu Pan, Xin Ma, Yu Ding, Zhisong Pan

Federated learning (FL) is a widely employed distributed paradigm for collaboratively training machine learning models from multiple clients without sharing local data.

A Multi-In and Multi-Out Dendritic Neuron Model and its Optimization

no code implementations • 14 Sep 2023 • Yu Ding, Jun Yu, Chunzhi Gu, Shangce Gao, Chao Zhang

Recently, a novel mathematical ANN model, known as the dendritic neuron model (DNM), has been proposed to address nonlinear problems by more accurately reflecting the structure of real neurons.

The MI-Motion Dataset and Benchmark for 3D Multi-Person Motion Prediction

1 code implementation • 23 Jun 2023 • Xiaogang Peng, Xiao Zhou, Yikai Luo, Hao Wen, Yu Ding, Zizhao Wu

We believe that the proposed MI-Motion benchmark dataset and baseline will facilitate future research in this area, ultimately leading to better understanding and modeling of multi-person interactions.

FlowFace++: Explicit Semantic Flow-supervised End-to-End Face Swapping

no code implementations • 22 Jun 2023 • Yu Zhang, Hao Zeng, Bowen Ma, Wei Zhang, Zhimeng Zhang, Yu Ding, Tangjie Lv, Changjie Fan

The discriminator is shape-aware and relies on a semantic flow-guided operation to explicitly calculate the shape discrepancies between the target and source faces, thus optimizing the face swapping network to generate highly realistic results.

Artificial Intelligence/Operations Research Workshop 2 Report Out

no code implementations • 10 Apr 2023 • John Dickerson, Bistra Dilkina, Yu Ding, Swati Gupta, Pascal Van Hentenryck, Sven Koenig, Ramayya Krishnan, Radhika Kulkarni, Catherine Gill, Haley Griffin, Maddy Hunter, Ann Schwartz

This workshop Report Out focuses on the foundational elements of trustworthy AI and OR technology, and how to ensure all AI and OR systems implement these elements in their system designs.

TalkCLIP: Talking Head Generation with Text-Guided Expressive Speaking Styles

no code implementations • 1 Apr 2023 • Yifeng Ma, Suzhen Wang, Yu Ding, Bowen Ma, Tangjie Lv, Changjie Fan, Zhipeng Hu, Zhidong Deng, Xin Yu

In this work, we propose an expression-controllable one-shot talking head method, dubbed TalkCLIP, where the expression in a speech is specified by the natural language.

2D Semantic Segmentation task 3 (25 classes)

Talking Head Generation

Multi-modal Facial Affective Analysis based on Masked Autoencoder

no code implementations • 20 Mar 2023 • Wei Zhang, Bowen Ma, Feng Qiu, Yu Ding

The CVPR 2023 Competition on Affective Behavior Analysis in-the-wild (ABAW) is dedicated to providing the high-quality, large-scale Aff-wild2 dataset for the recognition of commonly used emotion representations, such as Action Units (AU), basic expression categories (EXPR), and Valence-Arousal (VA).

DINet: Deformation Inpainting Network for Realistic Face Visually Dubbing on High Resolution Video

1 code implementation • 7 Mar 2023 • Zhimeng Zhang, Zhipeng Hu, Wenjin Deng, Changjie Fan, Tangjie Lv, Yu Ding

Different from previous works relying on multiple up-sample layers to directly generate pixels from latent embeddings, DINet performs spatial deformation on feature maps of reference images to better preserve high-frequency textural details.

Multi-Scale Control Signal-Aware Transformer for Motion Synthesis without Phase

no code implementations • 3 Mar 2023 • Lintao Wang, Kun Hu, Lei Bai, Yu Ding, Wanli Ouyang, Zhiyong Wang

As past poses often contain useful auxiliary hints, in this paper, we propose a task-agnostic deep learning method, namely Multi-scale Control Signal-aware Transformer (MCS-T), with an attention based encoder-decoder architecture to discover the auxiliary information implicitly for synthesizing controllable motion without explicitly requiring auxiliary information such as phase.

Effective Multimodal Reinforcement Learning with Modality Alignment and Importance Enhancement

no code implementations • 18 Feb 2023 • Jinming Ma, Feng Wu, Yingfeng Chen, Xianpeng Ji, Yu Ding

Specifically, we observe that these issues make it difficult for conventional RL methods to learn a useful state representation in end-to-end training with multimodal information.

StyleTalk: One-shot Talking Head Generation with Controllable Speaking Styles

1 code implementation • 3 Jan 2023 • Yifeng Ma, Suzhen Wang, Zhipeng Hu, Changjie Fan, Tangjie Lv, Yu Ding, Zhidong Deng, Xin Yu

In a nutshell, we aim to attain a speaking style from an arbitrary reference speaking video and then drive the one-shot portrait to speak with the reference speaking style and another piece of audio.

InterMulti: Multi-view Multimodal Interactions with Text-dominated Hierarchical High-order Fusion for Emotion Analysis

no code implementations • 20 Dec 2022 • Feng Qiu, Wanzeng Kong, Yu Ding

Humans are sophisticated at reading interlocutors' emotions from multimodal signals, such as speech contents, voice tones and facial expressions.

TCFimt: Temporal Counterfactual Forecasting from Individual Multiple Treatment Perspective

no code implementations • 17 Dec 2022 • Pengfei Xi, Guifeng Wang, Zhipeng Hu, Yu Xiong, Mingming Gong, Wei Huang, Runze Wu, Yu Ding, Tangjie Lv, Changjie Fan, Xiangnan Feng

TCFimt constructs adversarial tasks in a seq2seq framework to alleviate selection and time-varying bias and designs a contrastive learning-based block to decouple a mixed treatment effect into separated main treatment effects and causal interactions which further improves estimation accuracy.

EffMulti: Efficiently Modeling Complex Multimodal Interactions for Emotion Analysis

no code implementations • 16 Dec 2022 • Feng Qiu, Chengyang Xie, Yu Ding, Wanzeng Kong

In this paper, we design three kinds of multimodal latent representations to refine the emotion analysis process and capture complex multimodal interactions from different views, including an intact three-modal integrating representation, a modality-shared representation, and three modality-individual representations.

Domain Generalization by Learning and Removing Domain-specific Features

1 code implementation • Advances in Neural Information Processing Systems 2022 • Yu Ding, Lei Wang, Bin Liang, Shuming Liang, Yang Wang, Fang Chen

With the images output by the encoder-decoder network, another classifier is designed to learn the domain-invariant features to conduct image classification.

Ranked #18 on Domain Generalization on PACS

FlowFace: Semantic Flow-guided Shape-aware Face Swapping

no code implementations • 6 Dec 2022 • Hao Zeng, Wei Zhang, Changjie Fan, Tangjie Lv, Suzhen Wang, Zhimeng Zhang, Bowen Ma, Lincheng Li, Yu Ding, Xin Yu

Unlike most previous methods that focus on transferring the source inner facial features but neglect facial contours, our FlowFace can transfer both of them to a target face, thus leading to more realistic face swapping.

Facial Action Unit Detection and Intensity Estimation from Self-supervised Representation

no code implementations • 28 Oct 2022 • Bowen Ma, Rudong An, Wei Zhang, Yu Ding, Zeng Zhao, Rongsheng Zhang, Tangjie Lv, Changjie Fan, Zhipeng Hu

As a fine-grained and local expression behavior measurement, facial action unit (FAU) analysis (e.g., detection and intensity estimation) has been documented for its time-consuming, labor-intensive, and error-prone annotation.

Global-to-local Expression-aware Embeddings for Facial Action Unit Detection

no code implementations • 27 Oct 2022 • Rudong An, Wei Zhang, Hao Zeng, Wei Chen, Zhigang Deng, Yu Ding

Then, AU feature maps and their corresponding AU masks are multiplied to generate AU-masked features that focus on local facial regions.

Facial Action Units Detection Aided by Global-Local Expression Embedding

no code implementations • 25 Oct 2022 • Zhipeng Hu, Wei Zhang, Lincheng Li, Yu Ding, Wei Chen, Zhigang Deng, Xin Yu

We find that AUs and facial expressions are highly associated, and existing facial expression datasets often contain a large number of identities.

Transformer-based Multimodal Information Fusion for Facial Expression Analysis

no code implementations • 23 Mar 2022 • Wei Zhang, Feng Qiu, Suzhen Wang, Hao Zeng, Zhimeng Zhang, Rudong An, Bowen Ma, Yu Ding

Then, we introduce a transformer-based fusion module that integrates the static vision features and the dynamic multimodal features.

TAKDE: Temporal Adaptive Kernel Density Estimator for Real-Time Dynamic Density Estimation

no code implementations • 15 Mar 2022 • Yinsong Wang, Yu Ding, Shahin Shahrampour

Kernel density estimation is arguably one of the most commonly used density estimation techniques, and the use of a "sliding window" mechanism adapts kernel density estimators to dynamic processes.

One-shot Talking Face Generation from Single-speaker Audio-Visual Correlation Learning

no code implementations • 6 Dec 2021 • Suzhen Wang, Lincheng Li, Yu Ding, Xin Yu

Hence, we propose a novel one-shot talking face generation framework by exploring consistent correlations between audio and visual motions from a specific speaker and then transferring audio-driven motion fields to a reference image.

Towards Futuristic Autonomous Experimentation--A Surprise-Reacting Sequential Experiment Policy

no code implementations • 1 Dec 2021 • Imtiaz Ahmed, Satish Bukkapatnam, Bhaskar Botcha, Yu Ding

An autonomous experimentation platform in manufacturing is supposedly capable of conducting a sequential search for finding suitable manufacturing conditions for advanced materials by itself or even for discovering new materials with minimal human intervention.

Audio2Head: Audio-driven One-shot Talking-head Generation with Natural Head Motion

1 code implementation • 20 Jul 2021 • Suzhen Wang, Lincheng Li, Yu Ding, Changjie Fan, Xin Yu

As this keypoint based representation models the motions of facial regions, head, and backgrounds integrally, our method can better constrain the spatial and temporal consistency of the generated videos.

Prior Aided Streaming Network for Multi-task Affective Recognition at the 2nd ABAW2 Competition

no code implementations • 8 Jul 2021 • Wei Zhang, Zunhu Guo, Keyu Chen, Lincheng Li, Zhimeng Zhang, Yu Ding

Automatic affective recognition has been an important research topic in the area of human-computer interaction (HCI).

Flow-Guided One-Shot Talking Face Generation With a High-Resolution Audio-Visual Dataset

1 code implementation • CVPR 2021 • Zhimeng Zhang, Lincheng Li, Yu Ding, Changjie Fan

To synthesize high-definition videos, we build a large in-the-wild high-resolution audio-visual dataset and propose a novel flow-guided talking face generation framework.

Learning a Facial Expression Embedding Disentangled From Identity

no code implementations • CVPR 2021 • Wei Zhang, Xianpeng Ji, Keyu Chen, Yu Ding, Changjie Fan

Facial expression analysis requires a compact expression representation that ignores identity.

Learning a Deep Motion Interpolation Network for Human Skeleton Animations

no code implementations • Computer animation & Virtual worlds 2021 • Chi Zhou, Zhangjiong Lai, Suzhen Wang, Lincheng Li, Xiaohan Sun, Yu Ding

In this work, we propose a novel carefully designed deep learning framework, named deep motion interpolation network (DMIN), to learn human movement habits from a real dataset and then to perform the interpolation function specific for human motions.

Cardiac Functional Analysis with Cine MRI via Deep Learning Reconstruction

no code implementations • 17 May 2021 • Eric Z. Chen, Xiao Chen, Jingyuan Lyu, Qi Liu, Zhongqi Zhang, Yu Ding, Shuheng Zhang, Terrence Chen, Jian Xu, Shanhui Sun

To the best of our knowledge, this is the first work to evaluate the cine MRI with deep learning reconstruction for cardiac function analysis and compare it with other conventional methods.

Write-a-speaker: Text-based Emotional and Rhythmic Talking-head Generation

1 code implementation • 16 Apr 2021 • Lincheng Li, Suzhen Wang, Zhimeng Zhang, Yu Ding, Yixing Zheng, Xin Yu, Changjie Fan

To be specific, our framework consists of a speaker-independent stage and a speaker-specific stage.

The temporal overfitting problem with applications in wind power curve modeling

1 code implementation • 2 Dec 2020 • Abhinav Prakash, Rui Tuo, Yu Ding

Using existing model selection methods, like cross validation, results in model overfitting in the presence of temporal autocorrelation.

Augmented Equivariant Attention Networks for Microscopy Image Reconstruction

no code implementations • 6 Nov 2020 • Yaochen Xie, Yu Ding, Shuiwang Ji

Advances in deep learning enable us to perform image-to-image transformation tasks for various types of microscopy image reconstruction, computationally producing high-quality images from the physically acquired low-quality ones.

A Spatio-temporal Track Association Algorithm Based on Marine Vessel Automatic Identification System Data

no code implementations • 29 Oct 2020 • Imtiaz Ahmed, Mikyoung Jun, Yu Ding

The proposed approach is developed as an effort to address a data association challenge in which the number of vessels as well as the vessel identification are purposely withheld and time gaps are created in the datasets to mimic the real-life operational complexities under a threat environment.

Graph Regularized Autoencoder and its Application in Unsupervised Anomaly Detection

no code implementations • 29 Oct 2020 • Imtiaz Ahmed, Travis Galoppo, Xia Hu, Yu Ding

In order to make dimensionality reduction effective for high-dimensional data embedding a nonlinear low-dimensional manifold, it is understood that some sort of geodesic distance metric should be used to discriminate the data samples.

Gaussian process aided function comparison using noisy scattered data

1 code implementation • 17 Mar 2020 • Abhinav Prakash, Rui Tuo, Yu Ding

This work proposes a new nonparametric method to compare the underlying mean functions given by two noisy datasets.

Methodology Applications

Multi-label Relation Modeling in Facial Action Units Detection

no code implementations • 4 Feb 2020 • Xianpeng Ji, Yu Ding, Lincheng Li, Yu Chen, Changjie Fan

The proposed method consists of data preprocessing, feature extraction, and AU classification.

Neighborhood Structure Assisted Non-negative Matrix Factorization and its Application in Unsupervised Point-wise Anomaly Detection

no code implementations • 17 Jan 2020 • Imtiaz Ahmed, Xia Ben Hu, Mithun P. Acharya, Yu Ding

Dimensionality reduction is considered an important step for ensuring competitive performance in unsupervised learning tasks such as anomaly detection.

Effective Super-Resolution Method for Paired Electron Microscopic Images

no code implementations • 23 Jul 2019 • Yanjun Qian, Jiaxi Xu, Lawrence F. Drummy, Yu Ding

The first difference is that in the electron imaging setting, we have a pair of physical high-resolution and low-resolution images, rather than a physical image with its downsampled counterpart.

Image and Video Processing

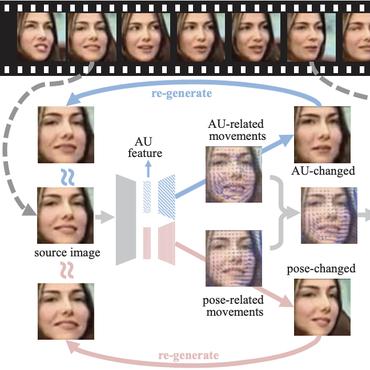

FReeNet: Multi-Identity Face Reenactment

1 code implementation • CVPR 2020 • Jiangning Zhang, Xianfang Zeng, Mengmeng Wang, Yusu Pan, Liang Liu, Yong Liu, Yu Ding, Changjie Fan

This paper presents a novel multi-identity face reenactment framework, named FReeNet, to transfer facial expressions from an arbitrary source face to a target face with a shared model.

Analysis of Massive Heterogeneous Temporal-Spatial Data with 3D Self-Organizing Map and Time Vector

no code implementations • 27 Sep 2016 • Yu Ding

Self-organizing maps (SOMs) have been widely applied in clustering; this paper focuses on the centroids of clusters and what they reveal.