Search Results for author: Yuezun Li

Found 24 papers, 8 papers with code

FreqBlender: Enhancing DeepFake Detection by Blending Frequency Knowledge

no code implementations • 22 Apr 2024 • Hanzhe Li, Yuezun Li, Jiaran Zhou, Bin Li, Junyu Dong

Existing methods typically generate these faces by blending real or fake faces in color space.

Texture-aware and Shape-guided Transformer for Sequential DeepFake Detection

no code implementations • 22 Apr 2024 • Yunfei Li, Yuezun Li, Xin Wang, Jiaran Zhou, Junyu Dong

In this paper, we propose a novel Texture-aware and Shape-guided Transformer to enhance detection performance.

Multilateral Temporal-view Pyramid Transformer for Video Inpainting Detection

no code implementations • 17 Apr 2024 • Ying Zhang, Yuezun Li, Bo Peng, Jiaran Zhou, Huiyu Zhou, Junyu Dong

The task of video inpainting detection is to expose the pixel-level inpainted regions within a video sequence.

DomainForensics: Exposing Face Forgery across Domains via Bi-directional Adaptation

no code implementations • 17 Dec 2023 • Qingxuan Lv, Yuezun Li, Junyu Dong, Sheng Chen, Hui Yu, Huiyu Zhou, Shu Zhang

Specifically, our strategy considers both forward and backward adaptation, to transfer the forgery knowledge from the source domain to the target domain in forward adaptation and then reverse the adaptation from the target domain to the source domain in backward adaptation.

Contrastive Multi-FaceForensics: An End-to-end Bi-grained Contrastive Learning Approach for Multi-face Forgery Detection

no code implementations • 3 Aug 2023 • Cong Zhang, Honggang Qi, Yuezun Li, Siwei Lyu

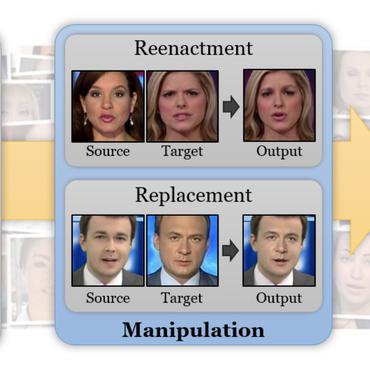

DeepFakes have raised serious societal concerns, leading to a great surge in detection-based forensics methods in recent years.

ForensicsForest Family: A Series of Multi-scale Hierarchical Cascade Forests for Detecting GAN-generated Faces

no code implementations • 2 Aug 2023 • Jiucui Lu, Jiaran Zhou, Junyu Dong, Bin Li, Siwei Lyu, Yuezun Li

The proposed ForensicsForest family is composed of three variants: ForensicsForest, Hybrid ForensicsForest, and Divide-and-Conquer ForensicsForest.

FakeTracer: Catching Face-swap DeepFakes via Implanting Traces in Training

no code implementations • 27 Jul 2023 • Pu Sun, Honggang Qi, Yuezun Li, Siwei Lyu

In light of these two traces, our method can effectively expose DeepFakes by identifying them.

Enhancing the Transferability via Feature-Momentum Adversarial Attack

1 code implementation • 22 Apr 2022 • Xianglong, Yuezun Li, Haipeng Qu, Junyu Dong

However, the guidance map is fixed in existing methods, so it cannot consistently reflect the behavior of networks as the image changes during iteration.

Imperceptible Adversarial Examples for Fake Image Detection

no code implementations • 3 Jun 2021 • Quanyu Liao, Yuezun Li, Xin Wang, Bin Kong, Bin Zhu, Siwei Lyu, Youbing Yin, Qi Song, Xi Wu

Highly realistic fake images generated with DeepFake techniques or GANs can fool people and cause serious social disturbance.

DFGC 2021: A DeepFake Game Competition

1 code implementation • 2 Jun 2021 • Bo Peng, Hongxing Fan, Wei Wang, Jing Dong, Yuezun Li, Siwei Lyu, Qi Li, Zhenan Sun, Han Chen, Baoying Chen, Yanjie Hu, Shenghai Luo, Junrui Huang, Yutong Yao, Boyuan Liu, Hefei Ling, Guosheng Zhang, Zhiliang Xu, Changtao Miao, Changlei Lu, Shan He, Xiaoyan Wu, Wanyi Zhuang

This competition provides a common platform for benchmarking the adversarial game between current state-of-the-art DeepFake creation and detection methods.

DeepFake-o-meter: An Open Platform for DeepFake Detection

no code implementations • 2 Mar 2021 • Yuezun Li, Cong Zhang, Pu Sun, Honggang Qi, Siwei Lyu

In recent years, the advent of deep learning-based techniques and the significant reduction in the cost of computation have made it feasible to create realistic videos of human faces, commonly known as DeepFakes.

Landmark Breaker: Obstructing DeepFake By Disturbing Landmark Extraction

no code implementations • 1 Feb 2021 • Pu Sun, Yuezun Li, Honggang Qi, Siwei Lyu

In this paper, we describe Landmark Breaker, the first dedicated method to disrupt facial landmark extraction, and apply it to obstruct the generation of DeepFake videos. Our motivation is that disrupting facial landmark extraction can affect the alignment of the input face and thereby degrade DeepFake quality.

LandmarkGAN: Synthesizing Faces from Landmarks

1 code implementation • 31 Oct 2020 • Pu Sun, Yuezun Li, Honggang Qi, Siwei Lyu

Face synthesis is an important problem in computer vision with many applications.

Exposing GAN-generated Faces Using Inconsistent Corneal Specular Highlights

1 code implementation • 24 Sep 2020 • Shu Hu, Yuezun Li, Siwei Lyu

We show that such artifacts exist widely in high-quality GAN synthesized faces and further describe an automatic method to extract and compare corneal specular highlights from two eyes.

Fast Portrait Segmentation with Highly Light-weight Network

no code implementations • 19 Oct 2019 • Yuezun Li, Ao Luo, Siwei Lyu

In this paper, we describe a fast and light-weight portrait segmentation method based on a new highly light-weight backbone (HLB) architecture.

Celeb-DF: A Large-scale Challenging Dataset for DeepFake Forensics

7 code implementations • CVPR 2020 • Yuezun Li, Xin Yang, Pu Sun, Honggang Qi, Siwei Lyu

AI-synthesized face-swapping videos, commonly known as DeepFakes, are an emerging problem threatening the trustworthiness of online information.

Hiding Faces in Plain Sight: Disrupting AI Face Synthesis with Adversarial Perturbations

no code implementations • 21 Jun 2019 • Yuezun Li, Xin Yang, Baoyuan Wu, Siwei Lyu

Recent years have seen fast development in synthesizing realistic human faces using AI technologies.

Exposing GAN-synthesized Faces Using Landmark Locations

no code implementations • 30 Mar 2019 • Xin Yang, Yuezun Li, Honggang Qi, Siwei Lyu

Generative adversarial networks (GANs) have recently led to highly realistic image synthesis results.

De-identification without losing faces

no code implementations • 12 Feb 2019 • Yuezun Li, Siwei Lyu

In this work, we describe a new face de-identification method that can preserve essential facial attributes in the faces while concealing the identities.

Exposing Deep Fakes Using Inconsistent Head Poses

1 code implementation • 1 Nov 2018 • Xin Yang, Yuezun Li, Siwei Lyu

In this paper, we propose a new method to expose AI-generated fake face images or videos (commonly known as Deep Fakes).

Exposing DeepFake Videos By Detecting Face Warping Artifacts

3 code implementations • 1 Nov 2018 • Yuezun Li, Siwei Lyu

Compared to previous methods, which use a large number of real and DeepFake-generated images to train a CNN classifier, our method does not need DeepFake-generated images as negative training examples, since we target the artifacts in affine face warping as the distinctive feature to distinguish real and fake images.

Exploring the Vulnerability of Single Shot Module in Object Detectors via Imperceptible Background Patches

no code implementations • 16 Sep 2018 • Yuezun Li, Xiao Bian, Ming-Ching Chang, Siwei Lyu

In this paper, we focus on exploring the vulnerability of the Single Shot Module (SSM) commonly used in recent object detectors, by adding small perturbations to patches in the background outside the object.

Robust Adversarial Perturbation on Deep Proposal-based Models

no code implementations • 16 Sep 2018 • Yuezun Li, Daniel Tian, Ming-Ching Chang, Xiao Bian, Siwei Lyu

Adversarial noises are useful tools to probe the weakness of deep learning based computer vision algorithms.

In Ictu Oculi: Exposing AI Generated Fake Face Videos by Detecting Eye Blinking

3 code implementations • 7 Jun 2018 • Yuezun Li, Ming-Ching Chang, Siwei Lyu

The new developments in deep generative networks have significantly improved the quality and efficiency of generating realistic-looking fake face videos.